As a long-time member of the agile software community, I’ve had a lot of time to think about how we used to do things and how we’re doing them now.

In doing this, I’ve come to realize that we’ve got a lot of mythology and misinformation about story points in particular, and so I invite you to join me in exploring the truth about story points (as I see it).

Relative Estimates

Back in the early 2000s we did “relative estimation” of user stories in units of story points. There is much written about the value of estimating in relative terms vs absolute terms, and we bought into that in a big way.

Even when people estimated in absolute terms of time, they would look at a project that they had previously done and use the time it had taken as a baseline for estimating the new work.

I didn’t originate the idea of story points, but I definitely was a practitioner. It was a confusing idea to me at the time (as no doubt Dave Chelimsky can remember explaining to me).

It is a simple concept, though, once you get past the initial obvious and naive misunderstandings of what they represent. I will be your guide to get past those naive misunderstandings and help you understand story points better.

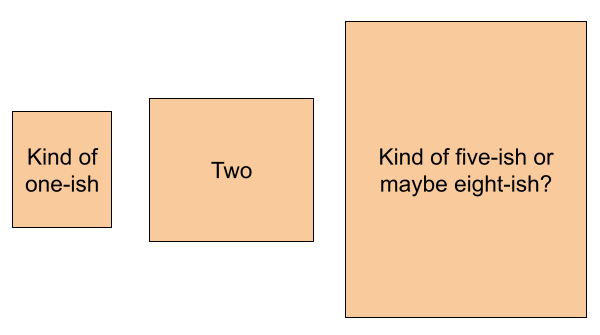

We used integers in the Fibonacci sequence, as is often still done today.

You would take some relatively small piece of work and call it a 2 (for instance).

If you have another bit of work that is only half as tough to do, you call that a 1. Maybe there weren’t other jobs much smaller than the 1, so we would call all our smallest jobs 1.

If you have some bigger piece of work, and it seems at least twice as bad as the one you called a 2, then it’s a 5. The Fibonacci sequence doesn’t include 4, so 5 was the closest “twice as big as 2” number.

If you have some piece of work that is bigger than the 5, but not twice as bad, then it’s an 8.

Working through all your proposed user stories in this way, you would come up with some rough sizing for the stories. It would often be good enough.

Great. But what do you do with those sizes?

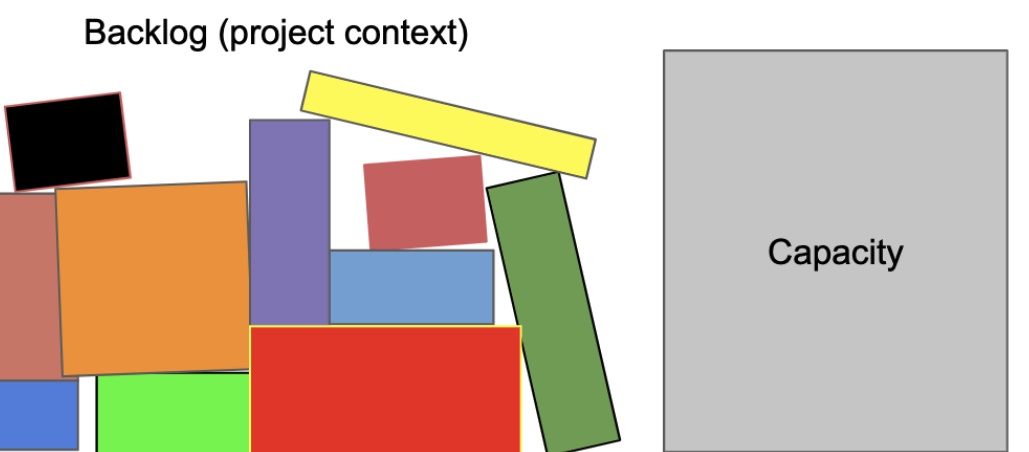

Planning By Capacity

We had a ceremony called the “planning game” and a protective measure called “yesterday’s weather.”

The idea is that we don’t want to take on more work than we can do, so we want to plan within our capacity to have a chance of completing the work we start. That’s a simple and humane idea, one which is much ignored these days.

We would meet with the business and say “we completed 23 points last week. Todd is going to take a vacation in the coming week, so we can only offer you 18 points this week.”

The business person would say “very well, if I only have 18 points, then I want these stories…” and they would list out roughly 18 points of stories for us to do.

We wouldn’t accept more work than we have proven we could complete in the previous week.

The amount we did last time was called “yesterday’s weather” based on an observation that today’s weather will most likely be just about like yesterday’s weather.

The team offered points, the business person (“the Customer”) would choose a set of stories whose estimates added up to the sum offered by the team, and off we would go. It seldom took half an hour.
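If it helps to see the arithmetic, here is a minimal sketch of that planning rule. The function names and the list of chosen stories are invented for illustration; the 23 points and the reduction to 18 come from the example above.

```python
def offered_capacity(last_completed: int, adjustment: int = 0) -> int:
    """Points to offer: what we finished last time, adjusted for known changes."""
    return max(0, last_completed + adjustment)


def fits_capacity(chosen_points: list[int], capacity: int) -> bool:
    """True when the Customer's chosen stories sum to no more than the offer."""
    return sum(chosen_points) <= capacity


# 23 points last week; the team judges that Todd's vacation costs about 5.
capacity = offered_capacity(23, adjustment=-5)

# Hypothetical set of stories picked by the Customer.
chosen = [5, 5, 3, 2, 2, 1]
print(capacity, sum(chosen), fits_capacity(chosen, capacity))
```

Nothing here predicts a date. It only checks that we are not accepting more than we recently proved we could finish.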

As a simplification, we would assume that two 8-point stories are roughly equivalent to eight 2-point stories, but this turned out to be based on some flawed assumptions and it didn’t really pan out over time.

For the sake of quick planning, we used the “it all sums up the same” assumption, but usually if we saw a few really big stories we would recognize the risk and try to split the stories smaller or work out a different distribution of large and small stories.

What does this number mean?

Here is where we get into trouble.

People tend to believe that there is some constant, K, such that:

story points * K = actual hours.

This is naive.

It seems completely reasonable and yet is almost perfectly wrong. It assumes linearity where the relationship is as likely to be exponential as linear, and it assumes a precision and consistency which are not present.

But if it’s not a linear measure of time, then what is it that we’re measuring?

Re-examine the history of story points and XP above. Where was anything laid out and measured in hours, minutes, feet, cubic meters, etc?

There were no measurements made in any dimension, direction, or unit.

We named a story “2.” We called another story a “1” because it seemed less than a 2, and another story a “5” because it seemed more than a 2.

Is it:

- More typing? No, we have no idea how much typing is involved. No programmer knows that.

- More code reading? No. We don’t know how much of the codebase we’ll have to study and research.

- More reading of supporting documentation? No. We can’t know that.

- More communication with stakeholders? How would we know what questions we might have in the future?

- More studying of the tech stack, language, frameworks, and libraries? Once again, we don’t know what we don’t know.

- More complexity? No, we don’t know how many variables, calculations, or logic branches we will require. We won’t know that until we’ve done the thing.

- More time? No, we don’t really know how long it takes, but it probably will fall (we hope) into the same range of times as other stories with roughly the same story point assignment.

Then what is it?

It is Muchness: all of the above and more.

The 5 feels like it may have more muchness than the 2, and the 1 feels like it has less muchness than the 2.

Some of the “muchness” was described in an earlier blog post in terms of risk, effort, and uncertainty, but muchness isn’t necessarily composed of only those things.

It may also be that the work will require more bureaucracy as it crosses departments and jurisdictions within the company.

Muchness is nebulous. It’s whatever one thinks might occur in the course of adding the feature (as they understand it) to the code base (as they understand it) to the best of their knowledge.

Since muchness is nebulous, story points are likewise nebulous.

Story points represent the expectation of muchness. Things may happen during design and coding that require far less or far more time, coordination, and effort than we anticipate.

Events like delays and interruptions can make it take longer even if we are accurate enough in gauging the muchness of a given task.

The stakeholder or PO or PM or Customer can usually decide to do less or more work.

If we have a grand design for a huge new vertical segment of our product community, and we must have it all before going to market, that’s going to be a lot. The muchness may be overwhelming.

If, on the other hand, we can start to work one problem at a time, or one data set at a time, then we can likely reduce the muchness of a delivery – and in doing so, shorten the time to delivery.

When we reduce how much work is to be done, we’ll see smaller story point numbers. This is a better direction than ruining the measurement by insisting on smaller story point numbers without changing the muchness of the work.

The less you are doing, the smaller the investment in doing it, and the quicker you can be done. This is also covered in another recommended blog post.

How much muchness per fortnight?

A significant problem with the whole world of story points is the fact that we named story points with numbers. That made it easier to compare. The muchness of a 5 is at least twice as much as a 2, etc.

But it’s not a time. It’s really an estimate band.

The stories are within band 1, band 2, band 3, band 5, band 8, etc to the best of our ability to approximate given what we know today. The story points are not numbers, they are names for groups of estimated jobs.

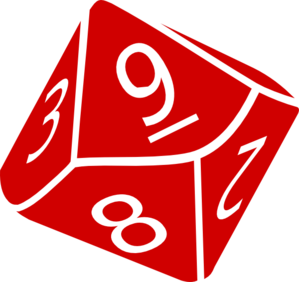

My favorite analogy is that story points are like dice. Let’s use a 10-sided die for example.

So a one-point story is like a single 10-sided die (written 1d10 and read “one die 10”) of some nebulous unit of time (half-days, hours, weeks, it doesn’t matter for our exposition).

If I roll the die, it will display a number from 1 to 10. The die represents a chance that any of the values may appear, much as a story has a spread of possible durations.

The work (story) that is a 1d10 story may take only one nebulous unit, or it may take 10. One answer is about as likely as the next. We don’t know precisely, but it’s in the right order of magnitude.

Either way, we don’t know until we roll the dice – or in our case implement the story. Only history is certain.

Being in the right order of magnitude is workable.

So, in our example, a two-point story is like two 10-sided dice (2d10), yielding a value from 2 to 20 nebulous units of time. You can see that some one-point stories may take longer than some two-pointers. It’s hard to say. It depends on how much work turns out to be required.

If you look up the probability chart for 2d10 you will see that there is a pretty strong chance (28%) that the dice will roll 10, 11, or 12. That is probably the likely answer that the team ‘felt’ when they guessed it to be 2 story points instead of a 1 story point, but again we don’t know until we actually roll the dice … er, implement the feature.

The five-point story is like 5d10. It may be anywhere from 5 nebulous units to 50. Again we have to consult a probability chart to learn that the most likely values for 5d10 are around 27 and 28. But that’s dice, not actual stories. Story points are not exactly like dice.
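If you would rather compute the probability chart than look it up, here is a small sketch that enumerates the exact distribution for any number of fair dice. The function name is my own invention, and the usual caveat applies: this is about dice, not actual stories.

```python
from collections import Counter
from fractions import Fraction


def dice_sum_distribution(num_dice: int, sides: int) -> dict[int, Fraction]:
    """Exact probability of each total for num_dice fair dice with `sides` faces."""
    counts = Counter({0: 1})
    for _ in range(num_dice):
        next_counts = Counter()
        for total, ways in counts.items():
            for face in range(1, sides + 1):
                next_counts[total + face] += ways
        counts = next_counts
    outcomes = sides ** num_dice
    return {total: Fraction(ways, outcomes) for total, ways in counts.items()}


one = dice_sum_distribution(1, 10)
two = dice_sum_distribution(2, 10)
five = dice_sum_distribution(5, 10)

# Chance that 2d10 lands on 10, 11, or 12.
p_10_to_12 = two[10] + two[11] + two[12]

# Chance that a "one-pointer" (1d10) rolls higher than a "two-pointer" (2d10).
p_one_exceeds_two = sum(
    p1 * p2 for a, p1 in one.items() for b, p2 in two.items() if a > b
)

# Average total for 5d10.
mean_5d10 = sum(total * p for total, p in five.items())

print(float(p_10_to_12), float(p_one_exceeds_two), float(mean_5d10))
```

With fair 10-sided dice this gives 28% for the 10-to-12 band, a 12% chance that the one-pointer outlasts the two-pointer, and an average of 27.5 for 5d10.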

Why are we using a ten-sided die? No reason. The analogy holds for 6-sided, 12-sided, or any other dice with more than two sides.

Why does it vary by team?

Teams do different work.

Because of that, the variability of the work is different.

You may find that one team is more like four-sided dice in their story points because the variability is low.

Other teams may be more like 6-sided, 12-sided, or 20-sided depending on the variability of their work.

Would you compare 10d6 (one team’s load) to 6d20 (another team’s load) and claim that the 10d6 team is getting more done because there are more dice? No, clearly not.

The scale and variability of stories differ across teams because the work and the teams are different.
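To put rough numbers on the 10d6 versus 6d20 comparison, here is a sketch using the standard formulas for the mean and standard deviation of a sum of fair dice. The function names are made up for this example, and real teams are not dice.

```python
import math


def dice_mean(num_dice: int, sides: int) -> float:
    """Average total of num_dice fair dice: each die averages (sides + 1) / 2."""
    return num_dice * (sides + 1) / 2


def dice_stddev(num_dice: int, sides: int) -> float:
    """Standard deviation of the total: each die has variance (sides^2 - 1) / 12."""
    return math.sqrt(num_dice * (sides ** 2 - 1) / 12)


for label, n, s in [("10d6", 10, 6), ("6d20", 6, 20)]:
    print(
        f"{label}: range {n}-{n * s}, "
        f"mean {dice_mean(n, s):.1f}, std dev {dice_stddev(n, s):.1f}"
    )
```

The 10d6 load averages 35 with a standard deviation of about 5.4, while the 6d20 load averages 63 with a standard deviation of about 14.1. Fewer dice, more muchness, and far more spread: counting dice across teams tells you nothing.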

What if we divide time by points?

Fair question. Let’s say my team worked in story points (we don’t) and that we have some kind of sprint or time box (we don’t), and that in the last timebox we turned in 25 story points.

That means that one story point was 1/25th of a sprint. If the sprint is composed of 250 hours, then we could do the easy division and say a story point is 10 hours.

We can say that, just as we can say any number of things that are just as deeply and essentially wrong.

If you roll twenty ten-sided dice and get a sum of 100, that doesn’t mean that you can plan on ten-sided dice to roll 5s.

The number of dice times 5 will not give you a useful prediction of your next roll.

Each time we execute in the timebox, we are rolling some number of dice (metaphorically) and our actual values can land anywhere in the distribution.

The next run with 20 points (20d10) may come up with 200 or 20 or any number in-between.

How can that be? Because the story point “number” we assigned is an estimate band based on the perceived muchness of the user story.

Trying to lock story points to a constant amount of time is a fool’s errand.

I was told that a story point is a half-day of effort!

Yes, you were. I’m sorry you were told that.

It’s based on a misunderstanding of story points we’ve been trying to fix for 20 years.

It is wrong.

It is exactly the kind of wrong thing that people tell each other when they don’t really know how they are wrong, or why they are wrong.

If it helps, see the Dunning-Kruger effect.

How can we schedule with story points, then?

The short answer is that you can’t. They’re not reliable.

That’s not even what they’re for.

The point of story points is not to know how long something will take, but to help avoid overworking our teams.

We did 25 story points of work last time, so we will take on no more than 25 story points this time.

We might not even finish them all, but it was an order-of-magnitude approximation, and it was close enough, often enough.

We were able to keep a more sustainable pace using yesterday’s weather than before we started doing that.

… but what if I’m really clever?

If you are into statistics and probabilities there is a way. Bear with me here.

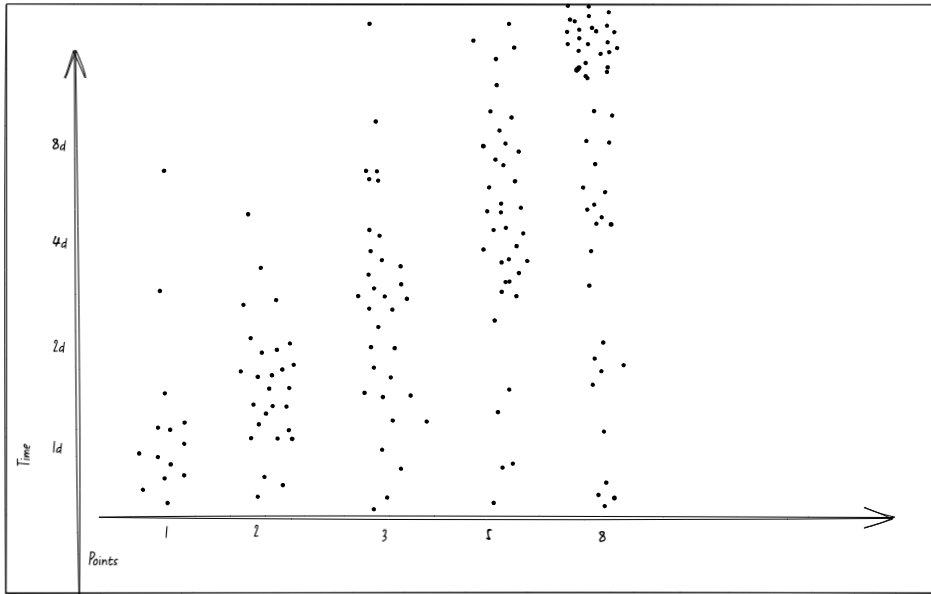

If you track actual hours to story points and present this on a scatter plot, you’ll see the range of values typical to a given story point assignment.

You might get something roughly similar to this:

Important Note: I fabricated the numbers in this graph. I have seen graphs of actual data, and the numbers and spreads vary by team. This is real-like data, but is by no means real data.

Once you have done the work over several months, you can see where the clusters are and start to recognize some likely values based on the story point estimate and the past performance of the group.

It seems likely in our graph that a one-point story will be done in a day, give or take half a day. There are outliers, of course, but there is a cluster.

Two-point stories look like they’ll be roughly two days in the graph above, with some outliers.

Three-point stories are harder to say, but most of the time each is done within 4 days, plus or minus a few days.

Five-point stories … that’s harder to say, but likely within 5 or 6 days, rarely fewer than 2.

Eight-point stories are likely to take a while. Maybe we shouldn’t have any stories this big?

Given the ranges, means, and standard deviations, you can devise a Monte Carlo simulation and calculate the duration with an 85% or higher likelihood of success. If you need better than 85%, try 90% or 95%.

If you really have to be sure, then use the highest recorded duration of each band. That is like assuming that every 10-sided die will roll a 10 every time. It’s super-pessimistic, so it’s likely to be super-safe.
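Here is one way such a simulation might look. Instead of fitting means and standard deviations, this sketch simply resamples recorded durations for each band (a bootstrap), which is a simplification I chose for brevity. The history, the backlog, and every function name are fabricated for illustration, and it assumes the stories are worked one after another.

```python
import random

# Hypothetical history: days actually taken, grouped by story point band.
# These numbers are invented, like the graph in this post.
HISTORY_DAYS = {
    1: [0.5, 1, 1, 1, 1.5, 1, 3],
    2: [1.5, 2, 2, 2.5, 2, 5],
    3: [2, 3, 4, 4, 5, 7],
    5: [2, 4, 5, 5, 6, 6, 11],
}


def simulate_totals(backlog, history, runs=10_000, seed=1):
    """Sorted total durations from many simulated passes through the backlog."""
    rng = random.Random(seed)  # fixed seed so the sketch is repeatable
    return sorted(
        sum(rng.choice(history[band]) for band in backlog)
        for _ in range(runs)
    )


def percentile(sorted_totals, pct):
    """Smallest total that at least pct percent of simulated runs did not exceed."""
    rank = (pct * len(sorted_totals) + 99) // 100  # ceiling of pct% of the runs
    return sorted_totals[max(rank, 1) - 1]


backlog = [1, 1, 2, 2, 2, 3, 5]  # hypothetical upcoming stories, by band
totals = simulate_totals(backlog, HISTORY_DAYS)
p50, p85, p95 = (percentile(totals, p) for p in (50, 85, 95))

# The "every die rolls a 10" plan: highest recorded duration in each band.
worst_case = sum(max(HISTORY_DAYS[band]) for band in backlog)

print(f"50%: {p50} days, 85%: {p85} days, 95%: {p95} days, worst: {worst_case} days")
```

The gap between the 50% figure and the 95% figure is the price of certainty, and the worst-case sum shows how pessimistic the super-safe plan is.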

It’s a fair amount of work, and you’re just hoping that not too many of the high-value outliers occur, but it seems reasonably possible to predict.

Of course, the graph will keep changing, and the team will have to keep accurate track of hours, so there are ongoing costs to doing this.

It’s a little complicated but it’s not impossible.

Certainly, it isn’t as easy as the naive decision to multiply story points by some constant.

It might be simpler if all the stories were intentionally built to be a 2. Then you could count stories and multiply by the mean value for the band plus the standard deviation, and that would be “pretty good” (probably).
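As a sketch of that shortcut, assuming every story really is a 2 and that you have recorded durations for past 2-point stories (the history below is invented, and the function name is mine):

```python
import statistics

# Hypothetical durations, in days, of past stories sized as 2 points.
two_point_history = [1.5, 2, 2, 2.5, 2, 5]


def padded_estimate(story_count: int, history: list[float]) -> float:
    """Story count times (mean duration plus one sample standard deviation)."""
    mean = statistics.mean(history)
    spread = statistics.stdev(history)  # sample standard deviation
    return story_count * (mean + spread)


print(round(padded_estimate(12, two_point_history), 1))
```

Note how a single 5-day outlier in the history inflates the padding for every story in the count.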

You may have hoped that story points would keep the math simple, but they clearly aren’t doing that.

Okay, how can we make the math simpler?

First off, don’t use story points. They’re too complicated, unreliable, and uncertain.

We flex our scope in order to meet our deadlines.

You have a consistent monthly burn rate (salaries and services for your teams) and you want to release something in 4 months. Great. Let’s start there.

We have a wild guess of what we hope to release in 4 months. We’re not sure it’s possible to get all the work done, but we decide to do it because it’s important, or at least we invest in finding out how hard it will be.

We strip down our expectations and figure out what is the least we could possibly build that would be at all useful and meaningful, and we shoot for that as a first milepost.

We divide it into small jobs, maybe using story mapping, maybe just listing capabilities and ordering them.

We will evolve a design that does what we need.

We build a bit of it, probably for proof of concept at first, so that it runs end-to-end. We tick that off the list.

We can’t tolerate code that doesn’t run. We have to have runnable, testable code all the time so that we measure and show actual progress.

We measure the rate that we’re burning through the list.

If we are not going to get it all done, we look for ways to do less. We cut some things, we thin some things out. If we’re moving quickly, we might add on some more stuff to do.

The working code is our measure of progress. We look at what it actually does now vs what we want it to do.

Over time, we get a sense of how many features/stories/behaviors we’re adding per week.

We order the list to be sure the most crucial things are done first.

This way when time runs out we’ll only be cutting the nice-to-haves or the most deferrable remaining items.
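The only arithmetic this needs is counting. A rough sketch, with invented names and numbers, of finding where the cut line currently falls in an ordered list:

```python
def split_at_cut_line(ordered_items, done_count, weeks_elapsed, weeks_total):
    """Split the unfinished items into (likely to fit, at risk of being cut)."""
    if weeks_elapsed <= 0:
        raise ValueError("need some elapsed time before a rate can be measured")
    rate = done_count / weeks_elapsed              # items finished per week so far
    weeks_left = max(0, weeks_total - weeks_elapsed)
    can_still_do = int(rate * weeks_left)          # round down to stay cautious
    remaining = ordered_items[done_count:]
    return remaining[:can_still_do], remaining[can_still_do:]


# Hypothetical: 30 ordered capabilities, 9 done after 6 of roughly 16 weeks.
backlog = [f"capability {i}" for i in range(1, 31)]
likely, at_risk = split_at_cut_line(
    backlog, done_count=9, weeks_elapsed=6, weeks_total=16
)
print(len(likely), "likely to fit;", len(at_risk), "candidates to cut or thin")
```

In this made-up case 15 more items look likely to fit and the last 6 are candidates for cutting or thinning. Because the list is ordered by importance, whatever falls past the line is already the most deferrable work.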

We don’t need story points or timeboxes to do this.

We don’t need to track hours worked anymore, or work out distribution curves, or run Monte Carlo simulations.

We can just work, and also keep looking for ways to save time/money by making features leaner.

WAIT! Not use them?

Right.

There’s no point in putting yourself through the hassle of tracking, calculating, simulating, etc. if you don’t have to.

You can stop using story points.

You probably don’t need velocity or time boxes or any of that.

You’ll likely be more agile without them.

Collaboration, working software, scope flex… you can probably do it with just those, well, depending on what the people above you in the hierarchy agree to.

If you are commanded by people above you on the org chart to do so, you can use points and simulations and hour tracking. I wouldn’t, but I’m not you.

Just don’t go telling people that it was my idea or that I told you to do it – I didn’t.

Related Discussion:

We had an open discussion through our Industrial Logic TwitterSpace. Here is the recording of that conversation.