It is common for the measurement of unit test coverage to contribute to the continuation of poor development practices. Often when teams try to improve their code coverage, they unwittingly create more problematic code that continues to be a drag on them and their organization. Increasing code coverage without improving development practices will not lead to sustainable improvements in software quality.

Coverage is a Result, Not a Goal

The crux of the problem is that high code coverage results from quality-first development practices. Naturally, organizations with low code coverage are missing these practices. Instead of dedicating time and effort to improving development practices, a focus on increasing code coverage misconstrues the result as the goal.

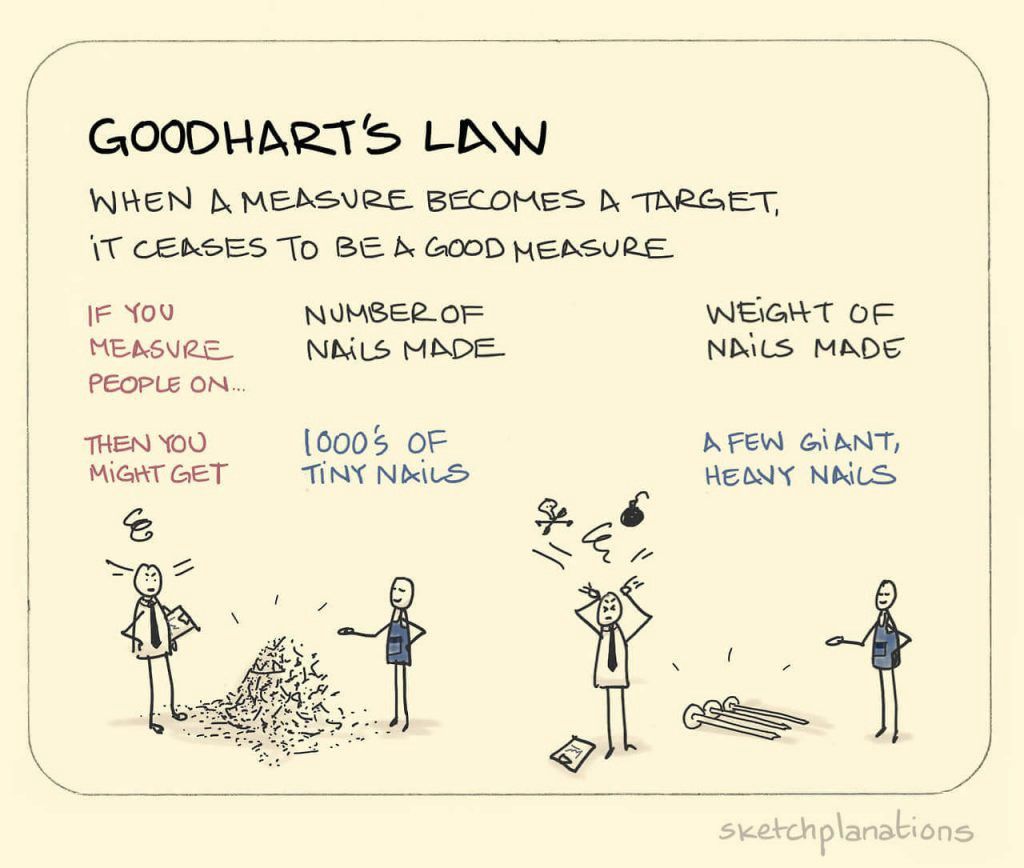

Goodhart’s Law

Coverage goals are often a textbook example of Goodhart’s Law. The law states that “when a measure becomes a target, it ceases to be a good measure.” Absent an outcome-based goal, pursuing higher code coverage will only increase suffering in the codebase.

When the focus is on the metric instead of the goal, it's a problem. Dominica DeGrandis - Making Work Visible

The Coverage Proxy Metric

Code coverage is considered a proxy metric for the software quality or the ability to find issues before they get to production. Ostensibly, codebases with high code coverage are easier to change and help prevent defects from escaping into production. That is not the case for organizations that try to achieve high code coverage alone.

Test Quality

Code coverage metrics cannot measure the quality of the tests. Low-quality tests can increase the code coverage without testing anything. The tests can be so hard to understand that they are a maintenance nightmare. Pursuing high code coverage without improving the system design or development practices is a recipe for creating more problematic code.

Achieving a Coverage Percentage

Code coverage cannot assess the importance of the tested code. Development teams trying to achieve a coverage percentage without learning new practices will look to get those percentage gains in the safest and fastest way possible, resulting in tests that are either not valuable or much too complicated and brittle.

Improving daily work is even more important than doing daily work. Gene Kim - The DevOps Handbook

In the absence of practices like TDD, refactoring, and legacy code techniques, teams will prefer tests that do not require a significant modification to the production code. Commonly resulting in:

- Simple tests that sidestep the riskiest areas of the codebase

- Brittle and flaky tests that suffer from false failures

- Tests that execute code without any verification

You can't write good tests for bad code. Unknown

Valuable Tests Achieve Lower Coverage Individually

The industry has adopted the term microtests to describe the attributes of the most valuable types of tests. The term microtest differentiates these tests (often a result of practicing TDD) from the vast majority of unit tests.

High-quality microtests are micro in size, run in microseconds, and test a micro-behavior. They are fast and cheap, and you can run the tests thousands of times without false negatives. The sheer number of these tests contributes to the high test coverage.

Writing Tests Without Business Value Increase Risk

When organizations have a code coverage goal, it often results in projects to write tests for areas of the code that are separate from the business value they are currently delivering. This introduces an avoidable risk for organizations. My recommendation is to build improvement habits by practicing them every day. Using a technique Martin Fowler calls Opportunistic Refactoring, developers improve the code that they need to change when they need to change it. The code that changes most often gets the most improvement. This same approach can be employed when improving code coverage. Write tests for untested code when it needs to be changed.

Writing high-quality tests for existing code requires refactoring to make the code testable (the Legacy Code Dilemma). When the creation of tests is separate from functional system changes, it incurs risk for the organization for no benefit. Code that is not changing does not need tests until it is modified. Creating backlog items and projects for writing tests won’t help development teams build the skills required for all code changes to come with microtests and improvements.

Benefits of Code Coverage

There are specific cases where measuring code coverage can be valuable. One such example is a team-level measure to track the progress of getting a legacy system under test.

Improving a legacy system feels like an overwhelming task at times. Teams rely on legacy code techniques to safely and incrementally improve the quality of the code as they are making functional changes. It is common for a team to feel like it would be best to rewrite the system from scratch. Tracking coverage can be a great morale booster for development teams to visualize their progress.

CRAP Metric

Static code analysis tools like NDepend or SonarQube use code coverage data for metrics they provide. One such metric is the CRAP metric. The appropriately named acronym stands for Change Risk Anti-Patterns. It measures the risk associated with changing an area of code. By scoring code based on its (lack of) code coverage and cyclomatic complexity, it can provide insights into the riskiest areas of a codebase to change.

Code coverage can be a valuable internal measure for a team. It loses value when it is imposed on them by their organization.

Recommendations

Software organizations should stop focusing on code coverage as a goal. Instead, focus on improving development practices like:

- Test-Driven Development

- Refactoring skills

- Legacy code techniques

- Software design skills

Encourage developers to learn and work together, adopting collaborative development practices like pair and mob programming. Leaders should be creating a learning environment where teams feel safe to take time to learn these skills. The result will be a significant quality improvement and thus an improvement in effectiveness, morale, and yes code coverage.

Related Discussion:

We had an open discussion through our Industrial Logic TwitterSpace. Here is the recording of that conversation.