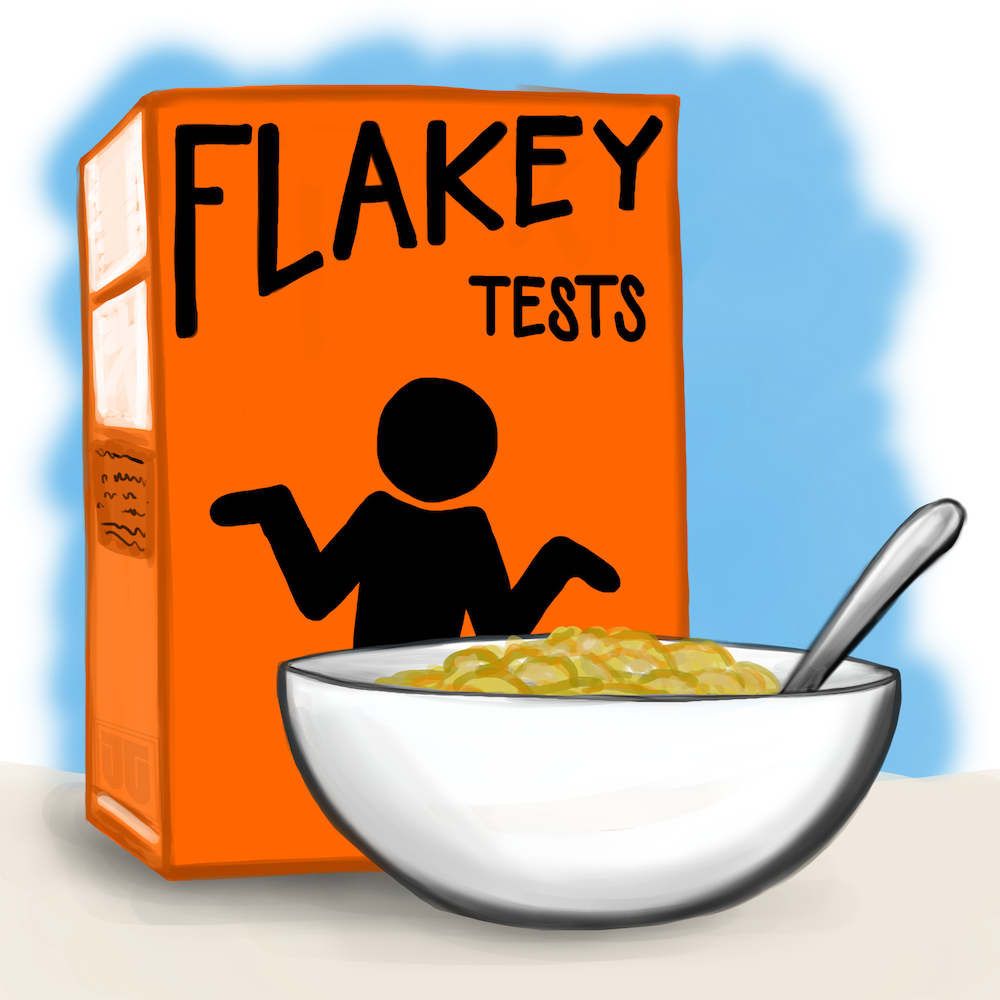

Flakey tests are the tests that work sometimes.

The same tests periodically fail or report errors. They have intermittent failures that sometimes seem random.

Sometimes if you rerun the flakey test, it’s fine. It runs and passes again even though you changed nothing.

Sometimes it will run fine alone but fails when run with all the other tests.

Or maybe some tests only run and pass when they’re run in a group and can’t be trusted when run alone.

What do you do?

- Rerun it and hope for the best?

- Delete the test?

- Mark it ‘ignored’ so it’s skipped?

- Get used to it failing?

These are emotional coping strategies. Emotional coping is an important skill, crucial when one is in a situation over which one does not have power (loss & grieving, difficult financial or social situations, etc.). I don’t want to paint emotional coping as a bad idea. Everyone needs to know how to persevere, endure, cope, adapt, and overcome.

The problem I want to call out here is the assumption of powerlessness: people who believe they are powerless end up coping with a problem rather than solving it.

People conditioned to tolerate spurious test failures are likely to ignore significant, real failures. If “some tests always fail,” how carefully do we examine them to be sure it’s only the bad ones failing?

Once we learn to ignore the tests, we have negated the value of having tests.

Flakey tests are not something inflicted upon humanity by a cruel and impersonal universe.

We are not helpless and don’t have to tolerate this kind of technical problem. We can overcome this.

The “right” answer (that promotes good habits, eliminates a source of frustration and distrust, and empowers the organization to move forward) is to diagnose and fix the tests.

To aid you in “doing the right thing,” I am providing a list of reasons that tests become flakey or maintenance-heavy.

Over-specifying Results

Tests are over-specifying when the tests look at too much of a JSON document, string, XML, DOM, or an HTML document being produced.

It is fairly easy (and sadly common) to write a test in such a way that it fails in the face of any minor adjustment or change in structure, phrasing, capitalization, positioning, or behavior, even if those changes have no real effect on correctness of the unit being tested.

Rather than look for specific evidence of an action, the assertions compare whole strings or whole documents where any number of things may have (correctly, harmlessly) changed or may vary for good reasons.

This is why some tests require a lot of maintenance.

Many xpath or CSS queries are highly specific and long, specifying a great deal of the document’s structure that may be non-essential to the test’s specific aim.

In another classic case, a test expects the very upper-left corner of a UI control to be at a given pixel position. Is it essential that the control is at 800,200 and not at 789,201? Probably not, but over-specification in UI tests (especially record & playback tests) is quite common.

In each of these cases, a more narrowly targeted assertion (one that checks only what the test is actually about) creates a more reliable outcome. This is a test-writing application of the Robustness Principle: be conservative in what you do, and liberal in what you accept from others. In this case, allow the actual data you receive to be different as long as it is still correct.

Rather than comparing a whole JSON document or an entire web page, why not look for the values you want in the placement you expect?

Maybe don’t check that the last line of the document says exactly, “Your total is $23.02!” Instead, why not check that the total line, wherever it is, contains “$23.02”?
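Here is a minimal sketch of that idea in Python. The JSON document, its field names, and the `extract_total` helper are all invented for illustration; the point is only that the assertion looks at the total and nothing else.

```python
import json
import re

# Hypothetical response from the code under test; field names are illustrative.
response_body = json.dumps({
    "order_id": 1187,
    "generated_at": "2024-03-05T14:22:09Z",
    "items": [{"sku": "A-100", "qty": 2}],
    "summary": "Thanks for shopping! Your total is $23.02!",
})


def extract_total(summary: str) -> str:
    """Return the first dollar amount found in the summary line."""
    match = re.search(r"\$\d+\.\d{2}", summary)
    if match is None:
        raise AssertionError(f"no dollar amount found in {summary!r}")
    return match.group(0)


document = json.loads(response_body)

# Targeted: only the total matters to this test, so rewording the greeting,
# adding fields, or changing the timestamp cannot break it.
assert extract_total(document["summary"]) == "$23.02"
```

A whole-document comparison would have failed on the timestamp alone.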

Precision in assertions pays off in lowered maintenance costs and reliable tests.

Often tests are hard to maintain because they’re too structure-aware so that any change to the design causes many tests to fail.

    x.container.contents.getItem(0).getEntity().getName().toLowerCase();

The solution is not difficult (though a little tedious): apply the Law of Demeter.

It pays off by making code and tests structure-shy and can make it easier and safer to apply mocks – if you’re into that kind of thing.
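As a rough sketch of what applying the Law of Demeter can look like, here is a Python version of that chain. The `Container`, `Item`, and `Entity` classes and the `first_entity_name` method are hypothetical stand-ins, not anything from a real codebase.

```python
from dataclasses import dataclass, field


@dataclass
class Entity:
    name: str


@dataclass
class Item:
    entity: Entity


@dataclass
class Container:
    contents: list = field(default_factory=list)

    def first_entity_name(self) -> str:
        """Answer the question callers actually ask, hiding the traversal."""
        return self.contents[0].entity.name.lower()


container = Container(contents=[Item(Entity("Widget"))])

# Structure-aware: breaks whenever any link in the chain is redesigned.
assert container.contents[0].entity.name.lower() == "widget"

# Structure-shy: only Container needs to change if its internals do.
assert container.first_entity_name() == "widget"
```

A test that calls `first_entity_name()` also has only one thing to stub, which is where the easier mocking comes from.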

Cross-Contamination

When tests must be run in a specific order, it is because the tests lack isolation.

Cross-contamination has occurred when test B cannot tolerate changes made by test A: whenever B runs after A, B crashes.

It is also present when test B only passes if it is run after A.

Cross-contamination leaves tests with implicit and often unintended dependencies. Since test A doesn’t declare that it’s setting up for test B, and B doesn’t declare that it needs A to run first, it is easy to make a small change to one test that causes others to fail. A person who is modifying test A has no warning that they may affect test B.

Transitive dependencies can make cross-contamination a nightmare.

Imagine a set of tests named A through K (that’s terrible test naming, but bear with me).

Furthermore, imagine that test J passes only if test A runs first, followed later by test D and then test F, and only if test K has not run.

Tests B, C, E, G, and H are completely inconsequential.

In order to fix the cross-contamination, you have to figure out which tests, in which order, affect which other tests and in what ways.

I’ve seen some organizations cope by using rather odd naming conventions after learning that their test runner executes tests in alphabetical order. They have tests named AAB_TestSomething and BC_TestMe so that they will sort in an order that doesn’t cause failures.

Ordering the tests is a reasonable experiment to explore cross-contamination, but it’s not a solution. Until the contamination is isolated and eliminated, tests are still relying on leftovers from other tests.

Virtually every test framework provides some means of performing setup and teardown. Using the appropriate setup and teardown, tests can remain isolated and self-contained.
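A minimal sketch using Python's built-in `unittest` shows the shape of it. The `REGISTRY` dictionary is a hypothetical stand-in for whatever shared state your tests touch (a database table, a cache, a singleton).

```python
import unittest

# Hypothetical module-level state standing in for a shared database or cache.
REGISTRY = {}


class RegistryTests(unittest.TestCase):
    def setUp(self):
        # Each test starts from a known, empty state...
        REGISTRY.clear()

    def tearDown(self):
        # ...and leaves nothing behind for the next test to trip over.
        REGISTRY.clear()

    def test_a_adds_user(self):
        REGISTRY["alice"] = {"role": "admin"}
        self.assertEqual(len(REGISTRY), 1)

    def test_b_starts_empty(self):
        # Passes whether or not test_a ran first.
        self.assertEqual(REGISTRY, {})
```

Neither test knows or cares whether the other has run, so they can be executed alone, together, or in any order.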

As a helpful feature, many test runners will execute the tests in random order to expose cross-contamination. These test runners report the random seed that was used so that people can investigate the failing tests.
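If your runner doesn't offer this, the mechanism is simple enough to sketch. The `shuffled_suite` helper and `SampleTests` class below are my own illustration built on Python's `unittest`, not a feature of any particular runner.

```python
import random
import unittest


class SampleTests(unittest.TestCase):
    def test_one(self):
        self.assertTrue(True)

    def test_two(self):
        self.assertTrue(True)

    def test_three(self):
        self.assertTrue(True)


def shuffled_suite(test_case_class, seed=None):
    """Build a suite whose test order is shuffled by a reportable seed."""
    if seed is None:
        seed = random.randrange(2**32)
    names = list(unittest.defaultTestLoader.getTestCaseNames(test_case_class))
    # A dedicated Random instance makes the order a pure function of the seed.
    random.Random(seed).shuffle(names)
    suite = unittest.TestSuite(test_case_class(name) for name in names)
    return seed, names, suite


seed, order, suite = shuffled_suite(SampleTests)
# Report the seed so a failing order can be replayed later.
print(f"random seed: {seed}; order: {order}")
```

Passing the reported seed back in (`shuffled_suite(SampleTests, seed=...)`) reproduces the exact order that exposed the failure.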

Fix the contamination problem, and you’ve got easy test repeatability.

Relying on Global State

What do you do when some tests pass on some machines (say, yours and the staging test environment) but not in other environments (your colleagues’ machines or production)?

Does the test rely upon:

- some global setting or environment variable?

- the existence (or non-existence) of a file or directory?

- reachability to a given IP address?

- certain values pre-populated in a database?

- credentials or permissions?

- a particular user account (possibly root)?

- non-existence of a given record, env variable, file, etc.?

Microtests have the luxury of testing highly isolated paths through specific functions, but larger tests (often called integration, system, or acceptance tests) may require that a system is more fully provisioned and prepared than is specified in the setup for microtests.

Whatever the test requires, it must also provide.

Often global setup problems are solved with a good test setup routine.

Sometimes the problem is solved in automated system provisioning scripts (Dockerfiles, etc.).
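For the small cases, a test can create exactly what it needs and clean up after itself. The following is a sketch only: `load_greeting` and the `APP_CONFIG` variable are hypothetical, while `tempfile.TemporaryDirectory` and `unittest.mock.patch.dict` are standard-library tools.

```python
import os
import tempfile
from pathlib import Path
from unittest import mock


def load_greeting() -> str:
    """Hypothetical code under test: reads a file named by an env variable."""
    config_path = Path(os.environ["APP_CONFIG"])
    return config_path.read_text(encoding="utf-8").strip()


def test_load_greeting():
    # The test creates the file and the variable it depends on...
    with tempfile.TemporaryDirectory() as tmp:
        config_path = Path(tmp) / "greeting.txt"
        config_path.write_text("hello\n", encoding="utf-8")
        with mock.patch.dict(os.environ, {"APP_CONFIG": str(config_path)}):
            assert load_greeting() == "hello"
    # ...and both are gone again once the test finishes.
    assert not config_path.exists()


test_load_greeting()
```

This test behaves the same on any machine, because it no longer cares what was lying around before it started.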

Relying Upon the Unreliable

It may be that the test depends on inherently unreliable qualities of the system.

- Random values

- Ordering in unordered structures

- Timing

- Date/Time

- Database quiescence
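The first two items on that list are usually the easiest to tame. As a small sketch (the `pick_winners` and `tags_for` functions are invented for illustration): inject a seeded random generator, and never assert on the order of something that doesn't promise one.

```python
import random


def pick_winners(entrants, count, rng=random):
    """Hypothetical code under test: choose `count` distinct entrants."""
    return rng.sample(sorted(entrants), count)


def tags_for(article):
    """Hypothetical code under test: returns a set, so no order is promised."""
    return set(article["tags"])


entrants = {"ann", "bob", "cy", "di"}

# Random values: inject a seeded generator so the outcome is repeatable.
first = pick_winners(entrants, 2, rng=random.Random(42))
second = pick_winners(entrants, 2, rng=random.Random(42))
assert first == second

# Unordered structures: compare as sets (or sort first), never by position.
assert tags_for({"tags": ["b", "a", "b"]}) == {"a", "b"}
```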

Often tests were written expecting that N milliseconds after a request was posted, the request will have been fulfilled, and all necessary processing will be done by the system.

If the purpose of the test is to ensure that the machine meets specific deadlines, this is fine and intentional.

The problem is when the timing isn’t the purpose of the test. By using a specifically-timed wait, this test has become dependent on the load of the current machine.

A busy system may be slow to respond, or an idle task may take time to activate.

If the program being tested is evolving, then the “N” in “N milliseconds” is still likely to change.

People will often compensate by making longer and longer sleep() calls, but there is no “perfect” number of milliseconds to wait.

A sleep() call has the unfortunate effect of increasing the minimum time that it takes to run the whole test suite.

People avoid running slow test suites. If the tests are slow enough, nobody will use them.
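One common alternative, which I'm offering as a suggestion rather than a cure-all, is to poll for the condition you care about and give up only at a generous deadline. The `wait_until` helper below is a hypothetical sketch, and the timer stands in for whatever background work your system does.

```python
import threading
import time


def wait_until(condition, timeout=2.0, interval=0.01):
    """Poll `condition` until it returns True or `timeout` seconds elapse."""
    deadline = time.monotonic() + timeout
    while time.monotonic() < deadline:
        if condition():
            return True
        time.sleep(interval)
    return condition()  # one last check at the deadline


# Hypothetical background job that finishes after an unpredictable delay.
done = threading.Event()
threading.Timer(0.05, done.set).start()

# Returns as soon as the job is done instead of always sleeping the full timeout.
assert wait_until(done.is_set, timeout=2.0)
```

The timeout is now only a worst case: a fast machine pays a few milliseconds, and a slow one gets the slack it needs.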

One of the most common problems we see is when people don’t lock down their test environment. They run the tests on the same machine and against the same database that other people are using for manual tests or data grooming. This results in collisions, such that record counts and record contents may vary.

The formula for reliability is deceptively simple:

Environment + Input + Algorithm = Output

If you don’t have a locked-down, controlled, test-specific environment (and content), you will get different outputs from the same algorithm and inputs, and your tests will not be reliable.

Consider running your tests in a pristine environment created specifically for the purpose of testing, with a configuration and a data set created and curated to be consistent and reliable and no other users active in the system.

You may have to spend a bit more time researching and locking down the environment, but it’s easily worth the time. People tend to use Docker or similar container-based technology to create well-controlled test environments in this modern era.

If you can’t have an isolated system, you will have to work hard to ensure that no cross-contamination or changes in load will affect your tests.

Mishandling Date/Time

This is a specific case that falls under the category of “Relying Upon the Unreliable,” but time is such a difficult problem that it rates a category of its own.

We often come across tests that are fine unless you run them at a time that crosses a minute, hour, day, or year boundary. Sometimes the boundary isn’t even in the current time zone - such as when UTC crosses a date boundary 6 hours before the Chicago-based server would have.
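To make the time zone case concrete, here is a small Python sketch. It assumes a fixed UTC-6 offset as a stand-in for Chicago standard time; real code would use a time zone database so that daylight saving is handled.

```python
from datetime import datetime, timedelta, timezone

# Assumption: a fixed UTC-6 offset stands in for Chicago standard time;
# real code would use a time zone database to account for daylight saving.
CHICAGO_STANDARD = timezone(timedelta(hours=-6), "CST")

instant = datetime(2024, 1, 1, 3, 0, tzinfo=timezone.utc)
local = instant.astimezone(CHICAGO_STANDARD)

# Same instant, two different calendar dates (and years).
assert instant.date().isoformat() == "2024-01-01"
assert local.date().isoformat() == "2023-12-31"
```

A test that computes "today" in one zone and compares it with a value computed in the other will fail for six hours out of every day.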

A friend of mine recently spotted (and called out) code like this:

    int year = Datetime.Now().getYear();
    int month = Datetime.Now().getMonth();
    int day = Datetime.Now().getDayOfMonth();
    int hour = Datetime.Now().getHour();
    int minute = Datetime.Now().getMinute();

I’m betting you can come up with quite a few edge cases in which this code will come up with surprising results.
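One such edge case: each line reads the clock separately, so if the calls straddle midnight on December 31, the year can come from one year and the month from the next. A minimal Python sketch of the usual remedy is to read the clock once and derive every field from that single reading (the `split_timestamp` helper is my own illustration).

```python
from datetime import datetime


def split_timestamp(moment: datetime):
    """Derive every field from one captured instant so they always agree."""
    return moment.year, moment.month, moment.day, moment.hour, moment.minute


# Read the clock once...
now = datetime.now()
# ...then take all the parts from that single reading.
year, month, day, hour, minute = split_timestamp(now)
assert datetime(year, month, day, hour, minute) == now.replace(second=0, microsecond=0)
```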

Sometimes tests are OK, except at the moment we switch into or out of Daylight Saving Time. The idea that time can skip an hour forward or backward is often not considered by a test writer.

One of the first things that many experienced test developers will do is to “mock the clock” – to put a fake, settable clock in place of the actual system clock so that they can control timing.
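Here is a minimal sketch of a mocked clock in Python. The `FakeClock` class and the `is_expired` function are hypothetical; the important part is that the code under test asks an injected clock for the time instead of reaching for the system clock.

```python
from datetime import datetime, timedelta


class FakeClock:
    """A settable stand-in for the system clock (hypothetical helper)."""

    def __init__(self, start: datetime):
        self._now = start

    def now(self) -> datetime:
        return self._now

    def advance(self, **kwargs) -> None:
        # Accepts the same keyword arguments as timedelta (seconds=, hours=, ...).
        self._now += timedelta(**kwargs)


def is_expired(issued_at: datetime, ttl: timedelta, clock) -> bool:
    """Hypothetical code under test: asks the injected clock, not the system."""
    return clock.now() - issued_at >= ttl


clock = FakeClock(datetime(2023, 12, 31, 23, 59, 30))
issued = clock.now()

assert not is_expired(issued, timedelta(minutes=1), clock)
clock.advance(seconds=60)  # step across the year boundary on demand
assert is_expired(issued, timedelta(minutes=1), clock)
assert clock.now().year == 2024
```

With a clock like this, the awkward boundaries stop being things you hope not to hit and become things you can test deliberately, with no sleeping involved.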

To develop an appreciation for the difficulties of dealing with time, please review the list of falsehoods developers believe about time.

Good Test, Flakey Program

Your problem may not be the tests at all; you may have consistent tests and intermittently failing code.

Concurrency issues may present as unreliable tests. An update anomaly may cause a calculation to go awry, or a race condition may cause a liveness issue that results in a timeout.

Some series of actions happen out of the order you expected, and as a result, some list is ordered differently than expected (see the “over-specification” section above).

Perhaps a numeric value overflows the data type it is assigned to, and you just happened to hit the overflow boundary.

Maybe the application mishandles dates and times, and you happened to hit a boundary (see above).

Maybe you’re using a network that has more or less latency than usual.

There are many ways that the actual application code could be sensitive to environmental issues or concurrency that might cause it to fail a test. When you’re looking at a flakey test, remember that you may be actually looking at a flakey application.

It’s always annoying to have a flakey test, but in this case, ignoring it could be dangerous.

TL;DR

Here is the list without the jabber:

- Over-specifying Results

- Cross-contamination

- Relying on Global State

- Relying Upon the Unreliable

- Mishandling Date/Time

- Good Test, Flakey Program