My colleagues and I recently developed and deployed our first Feature Fake, a fast, frugal way to learn whether users are interested in a feature before actually building it.

I learned this excellent technique from Laura Klein, a Lean UX guru from UsersKnow, who was one of the distinguished mentors at the recent Lean Startup Machine in San Francisco.

Laura plans to write a detailed blog post about Feature Fakes, but until that happens, I can’t help sharing my excitement about this technique.

Group Chat Anyone?

At the recent Startup Lessons Learned conference, Brad Smith of Intuit told a great story about how Quicken users were helping each other via their product’s Live Chat feature.

After the conference, I mentioned this story to my colleague, John Tangney, who quickly responded, “Yes, I love that feature! I used it the other day and someone in the chat helped me with a tax question.”

I had visions of creating a similar feature in our Agile eLearning, yet I wanted to know whether our users would be interested in such a feature before investing in it.

Within three hours, John and I had developed and deployed a fake Group Chat feature on our site, along with a report to help us measure user interest.

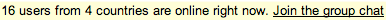

Our fake, which sits at the top of every eLearning page users see, looks like this:

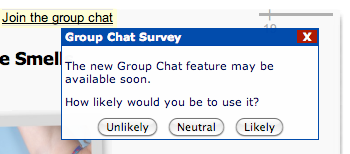

When a user clicks “Join the group chat,” they see this little survey popup:

Users can close the popup or choose to answer our survey.

Here are statistics after 100 unique users saw the fake:

| Unique Logins with Feature Present | Users who Tried the Feature | Users who Took Survey | Users who clicked "Unlikely" | Users who clicked "Neutral" | Users who clicked "Likely" |
|---|---|---|---|---|---|
| 100 | 22 (22%) | 14 | 1 (7%) | 7 (50%) | 6 (43%) |
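One way to gauge how much samples this small can tell us is to put a rough confidence interval around each rate. The sketch below is illustrative only: it uses the counts from the table, and the Wilson score interval (with the helper name `wilson_interval`) is an assumed choice of method, not something the original report is known to have computed.

```python
import math


def wilson_interval(successes, trials, z=1.96):
    """Approximate 95% Wilson score interval for a proportion (z=1.96)."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p = successes / trials
    denom = 1 + z**2 / trials
    center = (p + z**2 / (2 * trials)) / denom
    half_width = z * math.sqrt(p * (1 - p) / trials + z**2 / (4 * trials**2)) / denom
    return center - half_width, center + half_width


# Counts taken from the table of results.
saw_fake = 100
clicked = 22
took_survey = 14
answered_likely = 6

click_low, click_high = wilson_interval(clicked, saw_fake)
likely_low, likely_high = wilson_interval(answered_likely, took_survey)

print(f"Click rate: {clicked / saw_fake:.0%} "
      f"(95% interval {click_low:.0%} to {click_high:.0%})")
print(f"'Likely' among survey respondents: {answered_likely / took_survey:.0%} "
      f"(95% interval {likely_low:.0%} to {likely_high:.0%})")
```

With only 14 survey respondents, the interval around the "Likely" share comes out very wide, which is one way to see how little the survey answers alone can settle.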

More Experimentation Required

Our fake was live for three business days, during which 100 unique users saw it.

With 22 of the 100 users clicking the fake and 6 of the 14 survey respondents answering “Likely,” I did not find the data to be compelling proof that we ought to build the real group chat feature.

I do wish we had done a proper A/B test of the fake feature so that we could’ve learned how two differently worded versions of the fake would’ve affected the statistics.

Since we did not do that, we may run another experiment with a different Group Chat message and write some code to ensure that people who saw our previous fake will not see the new one.
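A minimal sketch of how such a follow-up experiment could be wired up is shown here. Everything in it is hypothetical: the function name `assign_variant`, the experiment label, the variant names, and the idea of keeping a set of IDs for users who saw the first fake are assumptions for illustration, not a description of any existing code.

```python
import hashlib


def assign_variant(user_id, previously_exposed,
                   variants=("A", "B"), experiment="group-chat-fake-v2"):
    """Return a variant name, or None if the user should not see the new fake.

    Users who already saw the earlier fake are excluded. Everyone else is
    assigned deterministically by hashing the experiment label and user ID,
    so the same user gets the same wording on every visit.
    """
    if user_id in previously_exposed:
        return None
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    return variants[int(digest, 16) % len(variants)]


# Hypothetical user IDs: the first saw the earlier fake, the second did not.
previously_exposed = {"user-17", "user-42"}
for uid in ("user-17", "user-99"):
    print(uid, assign_variant(uid, previously_exposed))
```

Hashing keeps the assignment stable without storing a per-user record of which wording was shown; the only stored state is the list of users to exclude.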

Fear of Fakes

When I first heard about Feature Fakes, I was a bit worried that if we produced too many of them, our users would eventually tire of these phantom features and be afraid to click.

So I raised my concern to Laura Klein, who said:

“In my experience, feature fakes do have to be used carefully. For example, I wouldn’t have several going at once, and I wouldn’t leave them in place for very long - just long enough to get the information you need to make a decision.

“Remember, if a lot of users are clicking on the feature, that means they’re excited about it, and you should probably build it pretty quickly. If very few users are clicking on it, you’re not going to disappoint that many users when you don’t build it. If you stick a stub out there for a few days, get great feedback on it, and then prioritize building the feature immediately, people may actually be more excited once it gets built because they’ve been anticipating it.”

Lessons Learned

Feature Fakes are:

- an excellent technique from the Lean UX community (thanks to Laura Klein for introducing them!)

- an invitation to your users to try a feature

- a fast way to validate interest or non-interest in a feature

- not meant to live for very long

- not proof that the feature itself will be popular (you’re only testing the fake version)

I’m delighted with the speed at which we learned from our Group Chat feature fake, and I definitely see us using this fast, frugal user-research technique in the future.

I’m also looking forward to learning more about this technique from Laura herself.