Test-Driven Development (TDD) is frequently misunderstood in ways that cause needless struggle, delay, and upset.

Misunderstanding and misrepresentation have been painful enough that developers have cried out in frustration, sometimes declaring the whole practice harmful, pointless, or even “dead.”

Perhaps we can help people come to a healthier and more productive understanding of this important practice.

What is it?

Mechanically, TDD is very simple.

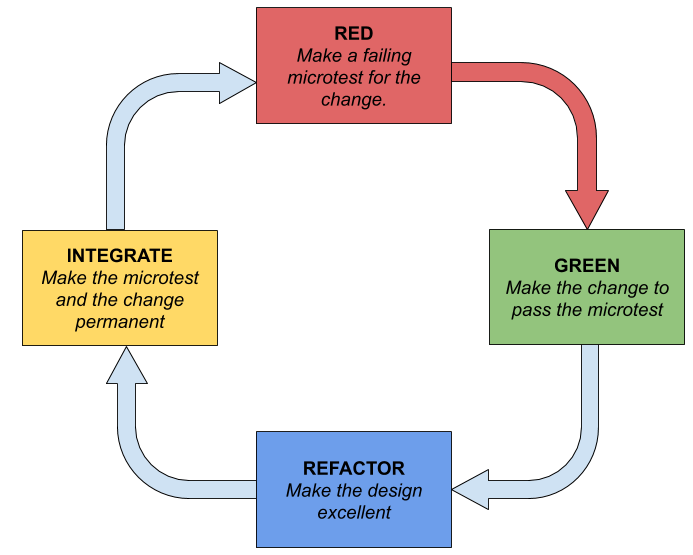

- You write a (micro-)test that fails because the code does not implement the behavior expected by the test.

- You immediately write code that causes the test to pass. Note the red arrow above. When there is any failing test, the programmers’ only job is to make all the tests pass, even if doing so means reverting a number of changes.

- Emboldened by having proof that the code fulfills its intended function so far, you revise the code and test so that new functionality gracefully fits into the code in a clear, intentional, obvious way.

- Now that the code is working at a fine granularity and all tests are passing, you commit the code to the source control system and retrieve changes made by other developers. All tests still pass.
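As a minimal sketch of what a few trips around this loop might leave behind, assume a hypothetical `is_leap_year` function (the name and the example are illustrative, not taken from any particular codebase). Each microtest was written first and seen to fail, the simplest passing code came next, and the expression was tidied only while every test was green.

```python
def is_leap_year(year: int) -> bool:
    """Hypothetical function under test, used only for illustration."""
    # Green: the first passing version was just `return year % 4 == 0`.
    # Later failing tests drove out the two century rules.
    # Refactor: with all tests passing, the rules were folded into one
    # expression without changing behavior.
    return year % 4 == 0 and (year % 100 != 0 or year % 400 == 0)


# Red: each microtest below was written before the code that satisfies it.
def test_year_divisible_by_four_is_leap():
    assert is_leap_year(2024)


def test_ordinary_year_is_not_leap():
    assert not is_leap_year(2023)


def test_century_is_not_leap():
    assert not is_leap_year(1900)


def test_fourth_century_is_leap():
    assert is_leap_year(2000)
```

The tests are plain functions with bare `assert` statements, so a runner such as pytest can collect them, or they can simply be called directly.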

Because TDD is a simple four-step process, most people underestimate the skill and technique required:

- Writing tests that support refactoring is a skill involving several techniques.

- Writing only the code that is needed to pass the test requires considerable restraint.

- Refactoring requires a number of technical skills and a degree of awareness of code structure to keep the tests passing at all times while changing the structure of the code.

- Integrating continuously with other developers involves a kind of social contract. We all depend on other people’s code being well-written and all tests passing.
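To illustrate the first of these skills, here is a small sketch under assumed names (`CartWithList`, `CartWithDict`, and `check_total` are hypothetical). The check only uses the public `add` and `total` methods, so the same check passes before and after the internal storage is restructured. A test that inspected the private list would have needed rewriting.

```python
class CartWithList:
    """Before refactoring: every added line is kept in a list."""

    def __init__(self):
        self._items = []

    def add(self, name, price_cents, quantity=1):
        self._items.append((name, price_cents, quantity))

    def total(self):
        return sum(price * qty for _, price, qty in self._items)


class CartWithDict:
    """After refactoring: repeated lines are merged into one entry."""

    def __init__(self):
        self._quantities = {}

    def add(self, name, price_cents, quantity=1):
        key = (name, price_cents)
        self._quantities[key] = self._quantities.get(key, 0) + quantity

    def total(self):
        return sum(price * qty for (_, price), qty in self._quantities.items())


def check_total(cart_class):
    """Behavior-level check: knows nothing about how lines are stored."""
    cart = cart_class()
    cart.add("tea", 300, quantity=2)
    cart.add("mug", 550)
    cart.add("tea", 300)
    assert cart.total() == 1450


# The same check holds for both structures, which is what makes the
# refactoring safe to attempt.
check_total(CartWithList)
check_total(CartWithDict)
```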

The Intention of TDD

TDD is programming hygiene. It keeps the code orderly while supporting incremental and iterative development with continuous integration.

The word orderly here suggests that there is a lack of clutter, confusion, and mess.

We could describe orderly code as code that is free from code smells, and would not be entirely wrong.

One can make significant progress from learning code smells and how to remedy them. Our Code Smells album and our free code-smells-to-refactorings cheat sheet can help set your feet on a good path.

Focusing only on code smells will not quite bring a programmer to understand writing orderly code. There are other skills required as well.

We might prefer to describe orderly code as code that is easy to understand and modify; code which is malleable and does not impede further development.

In a future blog post, we can discuss the combination of ideas around good code organization and signal-to-noise ratio in source code. We have other ground to cover here.

Incremental development is adding features or behaviors to code a bit at a time, rather than trying to complete all defined functionality and fit all constraints in a single pass or session.

Every time we add a feature, we are asking the team to do incremental development. In Agile teams, we plan to produce increments as frequently as possible.

Iterative development is revisiting existing code and reworking or elaborating it as necessary to improve the design of the whole.

These revisits are not the waste known as rework, but rather a hygienic reduction of waste. We take what we have learned and apply it so that the code (to quote Ward Cunningham) “looks like we knew what we were doing, to begin with” considering all the features that have been added or elaborated and domain knowledge gained since the first version was written.

When I refer to Continuous Integration I mean that software changes are shared with other developers as soon as possible: many times per day if possible.

For code to be shared with other developers, it needs to be “safe” and “done” (for small values of “done”) many times a day. That requires a much more incremental approach and the safety provided by having fast tests.

All of these behaviors would be risky unless there is some kind of practice (work hygiene) that would make it safe to revisit, revise, add functionality, and share code frequently with confidence.

This is why we do TDD. It creates safety for these developer behaviors.

TDD is Testing?

Some people think that TDD is a testing practice to be performed by testers.

TDD is a software development work hygiene used by software developers to enable _successful refactoring and continuous integration_.

There are a number of example-guided test disciplines, and all of these are good things when used as intended. ATDD and BDD may be best performed with testers left-shifted into requirements and design practices. The example-guided practices that support refactoring tend to be the most granular: microtesting, unit testing, and sometimes story-testing.

As an oversimplification, consider that testing is an activity that tries to assess or ensure the suitability of a product for use. If that is testing, then TDD is not testing.

TDD doesn’t prove that a system works, or that functions are clearly fulfilled. TDD exists primarily to create the conditions for refactoring. The fact that it uses tests (microtests) for this does not make it a testing practice.

The goal of TDD is to create the circumstances for quick refactoring, and most of the higher-level tests are just too slow-running to be useful for this purpose.

The TDD cycle is fast. If we can’t complete the cycle at least a dozen times an hour, then we end up compromising the speed of our work until we no longer feel we can afford to use the TDD cycle. We either do it quickly or (eventually) not at all.

UI-level testing is good, but you have to stand up an instance of the application and all of its supporting services in order to run UI-level tests, and these tests have to wait for screen or page refreshes. This takes a long time and can’t be done 6-12 times per hour by developers while maintaining their productivity.

Story tests and system tests are also good things that require too much setup and support to be done every 2 minutes or after every 2nd or 3rd line of code.

So, no, TDD isn’t something that testers can do after the program is written, and it is not suitable for the purposes of testers. It might reduce the number of defects found by testers, though, letting them focus on larger issues.

TDD is writing unit tests?

Some people believe that the purpose of TDD is to create unit tests, and of course, the density of unit tests can be measured in code coverage percentages. This is false.

There are many ways to create unit tests and to increase test coverage in source code, and only one of those ways is TDD.

A person could certainly get very good test coverage and generate a number of great unit tests by writing a little bit of code and then writing unit tests that cover all the paths through that code. Those tests could be written after the code is created. Why not?

In fact, one might be more efficient – writing exactly the set of tests that are needed to validate the code – by writing tests after the code is finished. I have found myself finishing code via TDD, and then adding tests to cover circumstances that I’d not considered during TDD.

But the purpose of TDD is not to create unit tests and increase test coverage. While this happens, it is a side-effect.

While having unit tests is a good thing (and even higher level tests a great thing) and can help a team have fewer escaped defects, the real goal here is to create the circumstances for refactoring code quickly.

TDD is All You Need?

Some people argue that TDD is not useful because it is not sufficient to prove that a system works. It seems to be helpful in ensuring small parts work, but it does little (or nothing) to prove that the assembled system operates correctly.

These people are right. It is not the purpose of TDD to do so.

But if we use this logic, we can also say that antibiotics are useless because they don’t cure fibromyalgia. Similarly, touring automobiles are worthless because you can only drive them where there are roads.

Arguing that TDD is not wholly sufficient for software quality misses the point. The point is that TDD (when done well) is easily sufficient to its purpose, but that purpose is not the proving of system sufficiency or correctness.

If you want to be sure your system works well, you need testing. That is different from TDD because it has different purposes and dynamics.

See, above, the discussion that TDD is not testing.

TDD Doesn’t Force Good Design

Okay. This is fair enough as assertions go, but forcing good design is not the purpose of TDD.

If one does a good job with TDD then the design can be modified with quick feedback. Iterative and incremental work is easily possible, and a poor design can be refactored into a much better design in short order.

TDD doesn’t do the design for you, but it provides many opportunities for you to improve your design.

In fact, every loop through the TDD cycle has an explicitly provided time for refactoring. If we don’t take advantage of that moment, then we’re likely to end up with code that needs to be refactored and hasn’t been.

One doesn’t automatically become a refactoring wizard just because they have started using the TDD loop, but the TDD process gives opportunities to learn these skills (which are also taught in the Refactoring album).

Bad Tests Impede Refactoring

Again, full agreement here.

Having bad tests is sometimes worse than having no tests.

If looping through the TDD cycle mindlessly ensured that all tests and all code were perfect, that would be awesome. I would be happy to throw the TDD diagram in front of green programmers and walk away.

But TDD is a set of skills and attitudes. These skills and attitudes have to be developed through practice and information.

Most of us who do TDD had to study these skills and techniques for some time. They’re not obvious and easy. We’ve even split into different camps about how TDD should be done.

We have documented so-called “antipatterns” and failure modes for TDD.

But each spin through the TDD cycle includes a Refactoring phase, in which people can refactor their tests and designs so that they don’t have to tolerate horrible tests with long setup routines and fragile timings.

And, besides, TDD isn’t about ‘having tests’ but about doing what is necessary to enable rapid and easy code changes and additions.

If your test offends you, cut it out. The tests should be small, cheap, fast, and easily discarded. Learning how to write microtests is one of the techniques one needs to learn; a technique taught in our Microtesting album.

One critic on Twitter suggested that TDD relies on mocking and faking to the degree that one cannot safely refactor code without having dozens (possibly hundreds) of tests to rewrite. If this is the case, we can recommend that one learn the technique of using fakes and mocks to support refactoring in the way that TDD requires. This technique can be learned from a variety of sources. Of course, we teach it in our Faking and Mocking album.
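One possible reading of that advice, offered as a sketch and not necessarily the approach the album teaches, is to prefer a small hand-written fake and assert on outcomes instead of on the exact sequence of calls. The names `InMemoryMailer` and `Signup` are hypothetical. The test below should survive internal changes to `Signup`, as long as a new member still receives exactly one welcome mail.

```python
class InMemoryMailer:
    """Hand-written fake: records mail instead of sending it."""

    def __init__(self):
        self.sent = []

    def send(self, to, subject):
        self.sent.append((to, subject))


class Signup:
    """Hypothetical production class that depends on some mailer."""

    def __init__(self, mailer):
        self._mailer = mailer
        self._members = set()

    def register(self, email):
        # Returns True only the first time an address is registered.
        if email in self._members:
            return False
        self._members.add(email)
        self._mailer.send(email, "Welcome")
        return True


def test_new_member_gets_one_welcome_mail():
    mailer = InMemoryMailer()
    signup = Signup(mailer)

    assert signup.register("ada@example.com")
    assert not signup.register("ada@example.com")

    # Assert on the observable outcome, not on how Signup got there.
    assert mailer.sent == [("ada@example.com", "Welcome")]
```

Because the test never specifies which private methods run or in what order, restructuring `Signup` does not force a rewrite of the test.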

Why All The Bad Press Out There?

The bad press is mostly from people who have legitimately had a hard time. They take to blogs and social media to describe how they struggled and why they gave up doing the hard thing that bothered them. They warn others, saving them from the frustrating waste of time.

This is a behavior I agree with. I would rather people not do TDD than do TDD badly or for purposes it was never intended to serve. Doing terrible TDD and expecting benefits it does not provide? Sounds awful.

There are plenty of great resources for learning to do TDD well. We have many blog posts. There are some great books that teach TDD; books written by experts like Kent Beck, Jeff Langr, James Grenning, and others. You will be able to find many videos on YouTube or from online training companies.

In addition, Industrial Logic has collected a lot of our best learning (including the above-mentioned albums) into a box set called “The Testing And Refactoring Box Set” which includes help for working with legacy code. We also leverage these resources in our live Testing and Refactoring Workshops.

TDD done well should support refactoring, which enables incremental and iterative development with continuous integration. If what you’re doing doesn’t enable and support these ways of working, then please consider studying the techniques that make it work rather than blaming yourself or your version of the process.

TDD is a work hygiene. It is an umbrella practice that involves a number of techniques that have to be learned. Invest in doing it well, and it can pay dividends.

Special thanks to early reviewers Jesus Vega, Josh Kerievsky, Mike Rieser, Jeff Langr, John Borys, Jenny Tarwater.