It’s great to have reliable tests in your pipeline to avoid escaped defects and to shorten the feedback loop for your programmers.

Sometimes the build-and-test process becomes a productivity-limiting problem. What do you do when your test suite takes too long to run?

Test As Soon As Possible

Around 2010, I worked on a team that had about 10,000 microtests running in 45 seconds.

Late note:

In 2023, with modern software and tools, it has been reported that some teams are running 50K microtests in 10 seconds.

The 2010 figure comes out to about five-thousandths of a second (0.0045 seconds, to be exact) per test. Those tests are pretty “micro.”
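The arithmetic behind those figures is easy to check. Here is a minimal sketch; the function name `seconds_per_test` is my own, made up for illustration.

```python
def seconds_per_test(test_count: int, total_seconds: float) -> float:
    """Average wall-clock time per test for one full run of the suite."""
    return total_seconds / test_count


# The 2010 team: 10,000 microtests in 45 seconds.
team_2010 = seconds_per_test(10_000, 45)

# The reported 2023 figure: 50,000 microtests in 10 seconds.
teams_2023 = seconds_per_test(50_000, 10)

print(f"2010: {team_2010 * 1000:.1f} ms per test")
print(f"2023: {teams_2023 * 1000:.1f} ms per test")
```

That works out to roughly 4.5 milliseconds per test for the 2010 suite and 0.2 milliseconds per test for the 2023 figure.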

But why does it matter? Why should people care about automated testing speed?

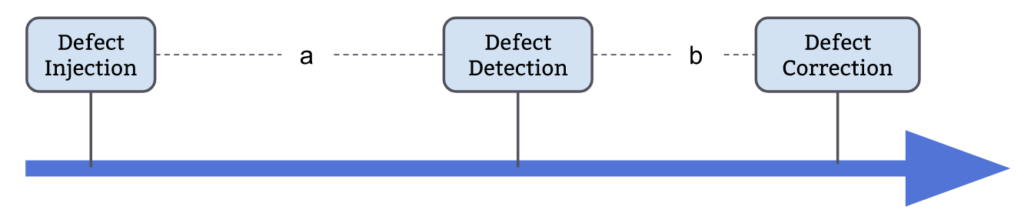

Consider the three crucial events in the life of a defect:

Defects are injected (accidentally) at some point by a programmer.

Later a person or an automated facility will detect that the error exists.

Sometime after detection, someone will endeavor to correct the defect.

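Notice the two intervals labeled a and b in the diagram: a is the time from injection to detection, and b is the time from detection to correction.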

While some defects remain shallow and easy to correct, the length of interval a seems, on average, to govern the length of interval b.

Consider an error that is detected while the programmer is still writing the code. The programmer backspaces and retypes the code and all is well.

Three things are working in the programmer’s favor:

- The code is still clear in the programmer’s head
- The changes that contain the defect are not mixed with other changes
- There are no other defects that mask or confuse the error’s true nature

If you wait a few weeks, then many things are working against the programmer:

- The change that includes the defect will be mixed with other changes
- The defect may occur in a way that makes it appear that other code is at fault
- Other defects or exception-handling code may mask the defect
- The programmer’s memory of the code change has faded
- The programmer making the correction may not be the original author

This is why the length of interval a tends to govern the length of interval b. It is important that defects are detected as soon as possible so that they may be more easily corrected.

There Are Many Tests

Many programmers have worked hard in statically-typed languages to ensure defects are caught by the compiler. Turning runtime defects into compile-time defects is a powerful technique that creates rapid, undeniable feedback.

Some languages even adopt a Design By Contract approach, such that code errors are detected by specific runtime assertions so that errors are uncovered more quickly.
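Python has no built-in Design By Contract support, but plain `assert` statements give a rough feel for the idea. The sketch below is only an illustration: `split_evenly` is a hypothetical helper I made up, with its preconditions and postconditions written as assertions.

```python
def split_evenly(total_tests: int, workers: int) -> list[int]:
    """Divide a number of tests across workers as evenly as possible."""
    # Preconditions: what the caller must guarantee.
    assert total_tests >= 0, "precondition: total_tests must not be negative"
    assert workers > 0, "precondition: need at least one worker"

    base, extra = divmod(total_tests, workers)
    shares = [base + 1 if i < extra else base for i in range(workers)]

    # Postconditions: what this function guarantees in return.
    assert sum(shares) == total_tests, "postcondition: no test lost or invented"
    assert max(shares) - min(shares) <= 1, "postcondition: shares are balanced"
    return shares


print(split_evenly(10, 3))
```

A call that breaks the contract, such as `split_evenly(10, 0)`, fails immediately at the assertion instead of surfacing later as a confusing error somewhere else. Keep in mind that Python skips `assert` statements when run with the `-O` flag, so this is a development-time safety net rather than production validation.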

Most of us have adopted some form of Test-Driven Development using microtests, and may have automated story tests (frequently called Behavior-Driven Development).

In addition to these low-level tests, we often have module-level (“contract”) tests, component acceptance (“intake”) testing, and automated acceptance testing.

We don’t stop there. Many organizations also have UI-level testing, along with penetration testing and performance testing.

That’s a lot of tests; therein lies our problem.

Tests Must Be Fast

Programmers are busy people and often are operating in pressurized circumstances.

We want to run the tests constantly as a habit, not a decision.

But what if running the tests takes a few minutes? Now programmers have to decide if they’ve done enough to merit running the tests.

If tests take several minutes, then programmers might run them once or twice in an hour instead of running them constantly.

If tests take long enough, programmers might run them less than once a day.

If tests take “too long”, programmers will not run them at all.

The period of ‘a’ increases, driving up the period of ‘b’.

Slow tests raise the cost of fixing defects.

Slow tests increase the likelihood that defects will be discovered late in the process, when you don’t have enough recovery time to fix them all.

If programmers aren’t running the tests, they are less likely to write tests. After all, the test suite already takes too long to run, so why make it worse? This increases the chance of bugs escaping into the wild.

What Can Be Done?

There are a number of strategies to use when addressing slow tests:

- Isolate build time from test time
- Get test timings
- Get timings for test setup

Isolating build time helps you decide where to spend your optimization effort. If the build consumes 80% of the time, don’t bother fixing the tests. Instead, look at some of the parallel, distributed build servers or look at re-architecting the system.

If the tests consume 80% of the time, the time consumed by the build doesn’t much matter.
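One rough way to get that split is to time the two phases separately. The sketch below assumes your build and your tests can each be started as a standalone command. The two commands shown are placeholders that just run a trivial Python one-liner, and the helper names `time_command` and `time_shares` are my own.

```python
import subprocess
import sys
import time


def time_command(cmd: list[str]) -> float:
    """Run a command to completion and return its wall-clock duration in seconds."""
    start = time.perf_counter()
    subprocess.run(cmd, check=False)
    return time.perf_counter() - start


def time_shares(timings: dict[str, float]) -> dict[str, float]:
    """Convert absolute timings into each phase's percentage of the total."""
    total = sum(timings.values())
    if total == 0:
        return {phase: 0.0 for phase in timings}
    return {phase: 100 * seconds / total for phase, seconds in timings.items()}


if __name__ == "__main__":
    # Placeholder commands: substitute your real build and test invocations.
    build_cmd = [sys.executable, "-c", "print('pretend build')"]
    test_cmd = [sys.executable, "-c", "print('pretend tests')"]

    timings = {"build": time_command(build_cmd), "test": time_command(test_cmd)}
    for phase, pct in time_shares(timings).items():
        print(f"{phase}: {timings[phase]:.2f}s ({pct:.0f}%)")
```

Run it a few times before drawing conclusions, since caches and incremental builds can make a single measurement misleading.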

Get test timings because sometimes a handful of tests take the lion’s share of the time. There might be some integration tests hiding among all your microtests. If you can get the list sorted by time, descending, you’ll have a good sense of where the time goes.
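Many test runners can write a JUnit-style XML report with a `time` attribute on each test case (pytest does this with `--junitxml`, and its `--durations=N` option prints the slowest tests directly). Assuming you have such a report, a sketch like the following sorts it for you. The sample report and the function name `slowest_tests` are invented for illustration.

```python
import xml.etree.ElementTree as ET


def slowest_tests(junit_xml: str, limit: int = 10) -> list[tuple[float, str]]:
    """Return (seconds, test id) pairs from a JUnit-style report, slowest first."""
    root = ET.fromstring(junit_xml)
    timings = []
    # iter() finds <testcase> elements at any depth, so reports rooted at
    # either <testsuites> or <testsuite> are handled the same way.
    for case in root.iter("testcase"):
        seconds = float(case.get("time", "0"))
        test_id = f"{case.get('classname', '')}.{case.get('name', '')}"
        timings.append((seconds, test_id))
    timings.sort(reverse=True)
    return timings[:limit]


# Made-up sample data standing in for a real report file.
SAMPLE_REPORT = """
<testsuite name="example">
  <testcase classname="PriceTest" name="test_rounding" time="0.002"/>
  <testcase classname="CheckoutTest" name="test_full_order_flow" time="3.410"/>
  <testcase classname="PriceTest" name="test_discount" time="0.003"/>
</testsuite>
"""

for seconds, test_id in slowest_tests(SAMPLE_REPORT):
    print(f"{seconds:8.3f}s  {test_id}")
```

In this invented sample, the one test that exercises a full order flow dwarfs the other two, which is exactly the kind of outlier you are hunting for.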

Perhaps you can replace slow tests with much faster tests. Quite often tests are slow because they’re written at a UI or API level but the code being tested is a simple function that can be covered through microtests instead.

If you can’t replace slow tests with faster tests (always worth a shot) then try segregating the slow tests from the fast tests.

If you segregate slow tests, have the build server run the microtests first, then the slower tests, then the slowest tests of all (usually integration and UI tests).
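Here is a minimal sketch of that fast-to-slow ordering. The stage names and commands are placeholders, `run_stages` is a hypothetical helper, and stopping at the first failing stage is my own assumption (you may prefer to run every stage regardless).

```python
import subprocess
import sys


def run_stages(stages: list[tuple[str, list[str]]]) -> list[str]:
    """Run test stages in order, stopping at the first failure.

    Returns the names of the stages that were actually started.
    """
    started = []
    for name, cmd in stages:
        started.append(name)
        if subprocess.run(cmd).returncode != 0:
            print(f"stage '{name}' failed; skipping the slower stages")
            break
    return started


# Placeholder commands standing in for real test invocations.
ok = [sys.executable, "-c", "raise SystemExit(0)"]
fail = [sys.executable, "-c", "raise SystemExit(1)"]

# Fastest first, slowest last.
stages = [("microtests", ok), ("integration", fail), ("ui", ok)]
print(run_stages(stages))
```

With this ordering, a failure in the fast stage is reported in seconds, and nobody waits for the UI tests to learn about it.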

Measuring test setup time helps see the time-wasters that the test runner doesn’t capture. Generally, the test runner only captures the time from when the individual test begins until it ends.

If there is setup that happens before the test starts, that setup might take many times longer than the tests themselves and may run many times. Slow setup is a very common testing problem.

UI tests typically require a full instance of the app to be stood up, complete with all the supporting functions (DB, messaging, logging, etc.). This pre-testing setup time isn’t counted by the test runner. I’ve seen some test suites spend half their time just creating a database and populating it with data. Check these things, and segregate out the tests that require a complete infrastructure (then see “Get test timings,” above).
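If your runner doesn’t report setup time, you can measure it yourself. The sketch below uses Python’s `unittest` and a made-up base class, `TimedSetupTestCase`, that accumulates the time spent in `setUp` per test class. The `build_fixture` hook and the sleeping example test are assumptions for illustration, not a standard API.

```python
import time
import unittest
from collections import defaultdict

# Cumulative seconds spent in setup, keyed by test class name.
setup_seconds = defaultdict(float)


class TimedSetupTestCase(unittest.TestCase):
    """Base class that records how long each test's setup takes."""

    def build_fixture(self) -> None:
        """Override with the real setup work (database, app instance, ...)."""

    def setUp(self) -> None:
        start = time.perf_counter()
        self.build_fixture()
        setup_seconds[type(self).__name__] += time.perf_counter() - start


class ExampleTest(TimedSetupTestCase):
    def build_fixture(self) -> None:
        time.sleep(0.05)  # stands in for slow setup such as populating a database
        self.rows = [1, 2, 3]

    def test_count(self) -> None:
        self.assertEqual(len(self.rows), 3)

    def test_sum(self) -> None:
        self.assertEqual(sum(self.rows), 6)


def run_and_report() -> unittest.TestResult:
    """Run the example tests, then print cumulative setup time per class."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(ExampleTest)
    result = unittest.TextTestRunner(verbosity=0).run(suite)
    for name, seconds in setup_seconds.items():
        print(f"{name}: {seconds:.3f}s in setup")
    return result


if __name__ == "__main__":
    run_and_report()
```

Because the setup runs once per test, two trivial tests here pay the setup cost twice. Multiply that by a few thousand tests and you can see how setup comes to dominate a suite.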

What do you do?

I hope this post helps you to understand the importance of keeping test suites small and fast, and that the suggestions help you to drive down the cost of defects.

If you take a different approach to speeding up a slow test suite, I would love to hear your approach as well! Please drop a link to your write-up in the comments, below.