Iteration Vs Divergence

I’ve spent the past few years looking at the world through a lens of divergence, spotting all kinds of issues in software development related to communication and alignment, and I hope to share this new perspective with you.

It’s a Team Effort

Software development is a team sport. All the software you have seen was likely written, revised, edited, and approved by many people over time.

The “solo software genius” is a mythic trope but it almost never happens. Even in the rare event that an individual with a brilliant idea creates the first (naive, primitive) version of a product, they quickly try to draw other people into the project to support and improve it.

Software development occurs most often in a product community. There are makers, managers, promoters, salespeople, buyers, users, maintainers, installers, operators – a whole host of people who have a personal interest in the software.

Team Efforts Require Team Focus

If some number of people engage in a shared endeavor, then it is important for them to agree on goals and means.

Let’s explore this through metaphor:

We all want to commute to work safely, but this morning we find that a car has broken down in the middle of our street and is blocking traffic.

We intend to clear the vehicle from the road.

We approach with a shared vision (flowing traffic) and a clear mission (moving the car) in mind.

If we are all going to push a stalled car to the curb, then we should probably agree on which curb and whether we will get there by going forward or backward. Who will push and who will steer?

Without agreement on goals and means, we will find ourselves working at cross-purposes.

Imagine that you push the car forward while I push it backward, and Joe turns the wheel to the right, thinking we were going to reverse to the left curb when we thought we were going forward to the right.

Soon we fall to arguing about our disagreements in the middle of the road, and nobody gets to work on time.

When teams fall apart, it’s often due to these types of misalignments.

We lack a shared goal, a set of working agreements, a shared process, or a shared understanding.

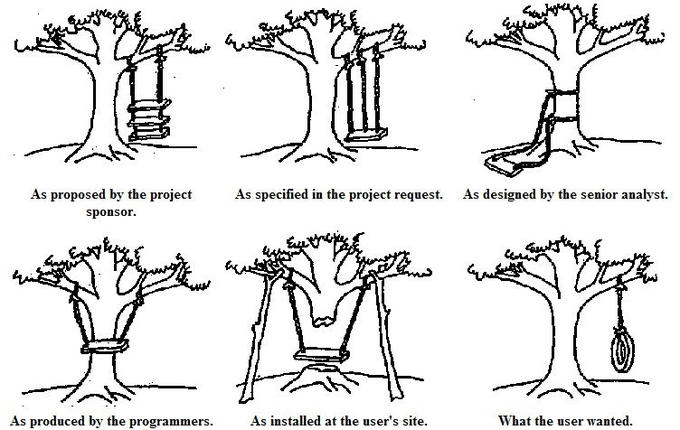

Requirement Divergence

In the car example, we may have started with a common goal, but we all had different ideas of what the result should look like. There is a classic cartoon illustrating (tongue-in-cheek) the different intentions of people in a software context:

In this case, they have hilarious (and unworkable) divergent ideas about what the solution is, and possibly what the problem is. No matter how conscientious and hard-working the team members are, the divergent ideas of the goal and mission will wreck their collaboration.

The case isn’t generally like the cartoon, though. It generally isn’t that one person had the right idea that everyone else misunderstood in absurd ways.

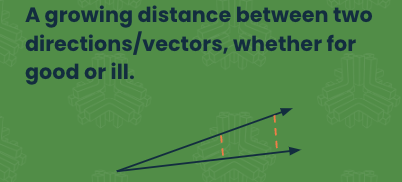

I offer this without blame. The problem isn’t that one person is wrong, but that the N different views aren’t coherent. There is a growing distance between the “vectors”, and an effort must be made to keep understanding coherent across the different groups and individuals.

Practice Divergence

Take a look at a similar situation, where a newer version of a programming language is accepted at a company. Some people stay aligned with the practices and idioms that have been at play for years, and others try to adopt the new language features.

In this case, one group is tracking the market and the other is holding the line on the old way of working.

Pretty soon the problem isn’t that one group does work the new way (this is not wrong), nor that a group is doing work the old way (which is not wrong), but that the two groups are working in different ways but in the same code base.

The difference becomes the problem.

Agile Origin

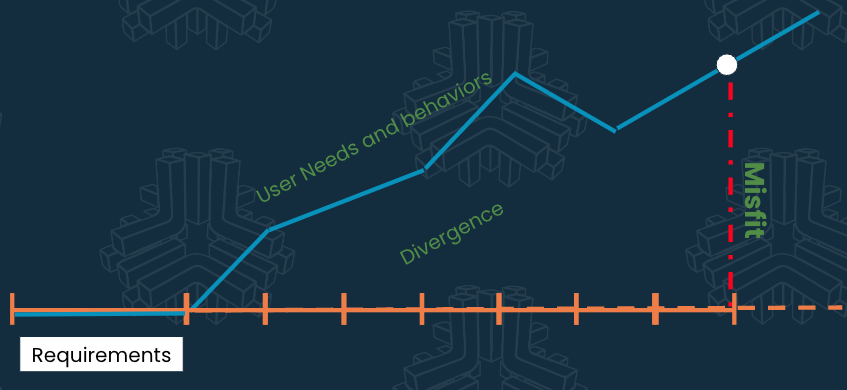

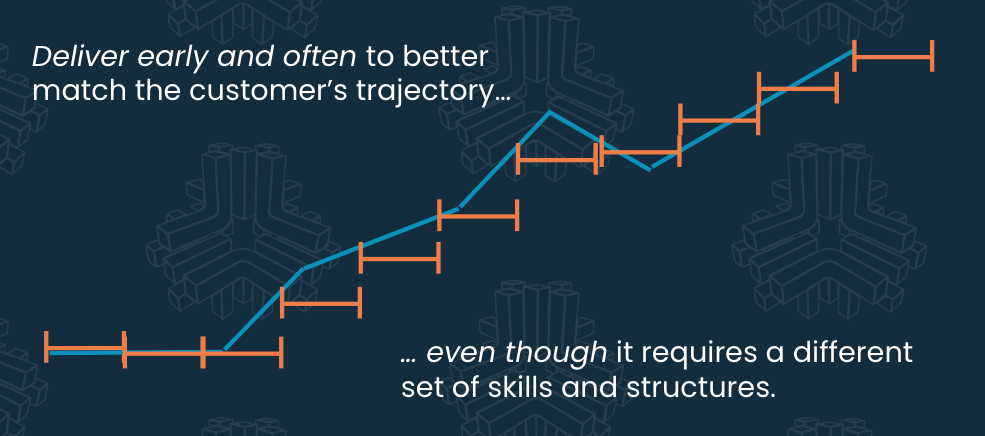

The original agile movement was a solution to the problem common in software development during the ’80s and ’90s, where the accepted process was to begin with user requirements, then systems requirements, then systems design, then detailed design, and only then did one start coding. When the coding was done, the official “testing phase” began.

Only when all of these “phases” were complete was the software delivered to customers.

If we had an error early in the design or analysis phase, we would end up writing the wrong software, and it would be many months (or several years) before we found out it was wrong.

We (including me, personally) put an awful lot of effort into being right to begin with. Our analysts and designers were very professional and ran a tight ship. We had copious amounts of documentation to support our decisions and communicate them to coders and testers.

However, no matter how well we studied the users’ needs, a few years is a very long time. We were likely to deliver the code that the user needed years ago, instead of the code that they need today.

When the misfit of our solution was great enough, the project failed and the contract was canceled. If the contract was very large, then it tended to produce a lot of press coverage and accusations.

What happened? The requirements were right. They accurately captured user needs. The solution was approved by the client.

However, while the requirements and designs were frozen, the client’s business and the whole realm of their business were not. To meet their needs on the day of delivery, they needed different software.

While the development effort held true to the original plan, that plan had remained static in a changing world.

Force the Users?

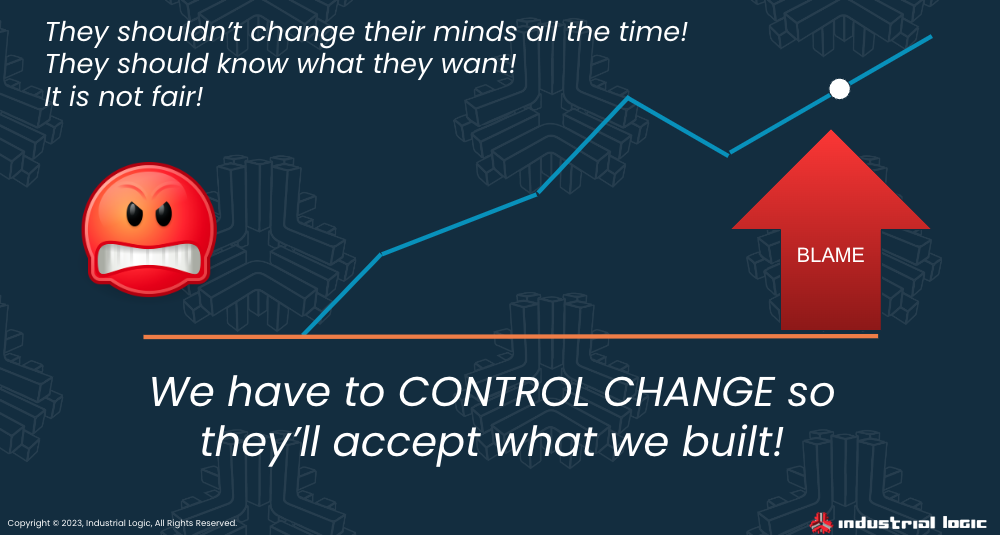

The software industry had a lot of power (how would they get any software without us?) and we disapproved of these changes. We instituted change control boards to fight against “scope creep” so that we could build the software that we had initially envisioned instead of constantly having to re-align to the way the business worked.

Sadly, forcing the users to keep their needs constant isn’t a workable solution.

We can argue that they shouldn’t have “changed their minds” but keeping to the original plan could have had them diverging from their customers’ needs - a potentially untenable situation for their business!

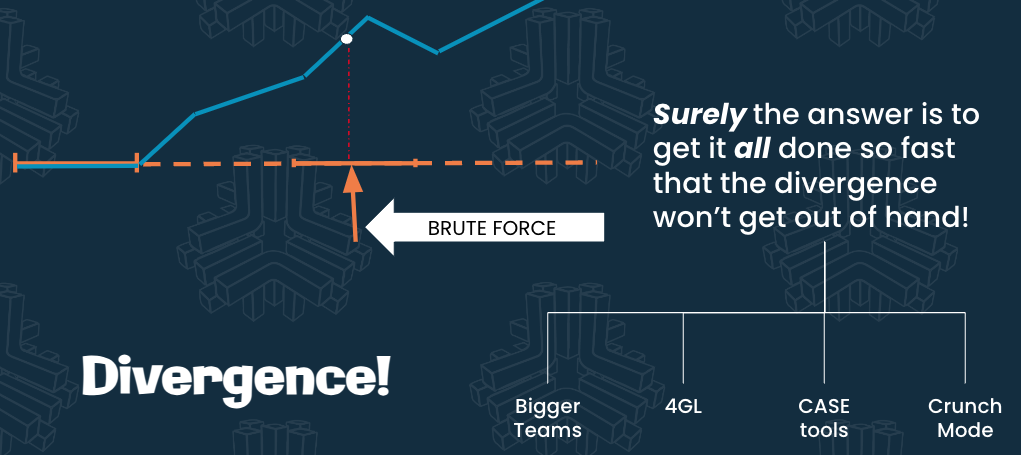

By the mid-1990s, we had come to some understanding of divergence. We realized that divergence happened over time, so we just needed to produce our designed solution fast while the customers still had the problem we were solving.

Force the Makers?

If we can’t force the users to “hold the line” on requirements, we can force the development team to hurry in the hope that we can deliver the system before the customer’s needs change too much.

The “easy” answer to deliver sooner (if you have the funds) is brute force.

Brute force solutions included having many more developers (I worked on a “team” of over 200 developers once), using simpler programming languages, using code-generating CASE tools, and working excessive overtime (I worked several months of 100-hour weeks).

The past brute-force solutions didn’t work very well, but about 30% of the time they did complete the work eventually, so the software was finally delivered and was still somewhat useful.

The fact that they produced a result sometimes gave the industry hope!

Surely, all we have to do is force it better and we could grow our percentage of success!

There is a lot of brute force in the world and little evidence that it is actually helpful.

I have concerns now that business is “banging the AI gong” as a way to brute-force solution development via armies of powerful code-generating computers. It reminds me of the 4GL and CASE tools that have failed us until now. I guess we’ll see how that works out.

A Radical Alternative

Some wild thinkers came up with some new ideas about software in the 1990s.

They used terms like “radical” and “extreme” to describe their work. Rapid Application Development and Lightweight Methods were such a departure from “proper” software engineering that it was obvious to the mainstream developers that it could never work.

The radical thinkers suggested that software analysis and design outputs weren’t sacred.

Rather than stick with a big, up-front plan, they would deliver tiny bursts of software functionality much more frequently, and then change the plan (gasp!) in order to stay in step with customer needs.

Spoiler alert: it worked. It still works. Sometimes it is the only thing that works.

This approach requires a lot of change for developers, and even more for managers, designers, and analysts.

It definitely has big advantages, in that you don’t fail due to divergence. According to some studies I’ve seen, this technique can give 29% more successes and 12 fewer “challenged” projects than any prior techniques.

“29% better” isn’t “100% guaranteed to work”, but it’s a significant step in the right direction.

This is a different way of doing things.

You have to be willing to plan loosely and adjust or abandon plans. Because change is likely, you have to find out for yourself what a reasonable planning horizon looks like in your domain and with your customers.

If you invest in analysis, design, and planning like we did in waterfall days then you’d be constantly going back to the drawing board. Planning a whole project that way and then releasing it in increments won’t even help a little.

You have to make short-term plans, but also you have to build highly maintainable and simple software so that you will be able to change it easily in unexpected ways.

As much as you don’t want to over-invest in analysis and design, you also can’t afford to over-invest in a technical direction you think might become important someday. You have to leave room to find out later.

This way of working is dependent on feedback.

This is why the XP teams had customers on the development team - they needed the fastest feedback possible to keep from over-planning, under-planning, or mistakenly planning on features that aren’t really needed.

One would think that was clearly spelled out when Agile Software Development was launched with the Manifesto for Agile Software Development, but it seems to be lost on about half the audience.

Using incremental (adding on features) and iterative (revisiting the design to incorporate the new features gracefully) design (AKA “Evolutionary Design”) we can keep up better with the changes in the world around us. That’s significant.

But what about the changes within the software community?

Other Divergence

It is not just the users and customers who change.

There is a lot of divergence in a software ecosystem, which is why it is hard to scale software development. If you can’t keep alignment in the midst of quick changes, you will have the “blind men and the elephant” problem - each person will have an entirely different idea of what system they’re building.

Let’s look at a few examples.

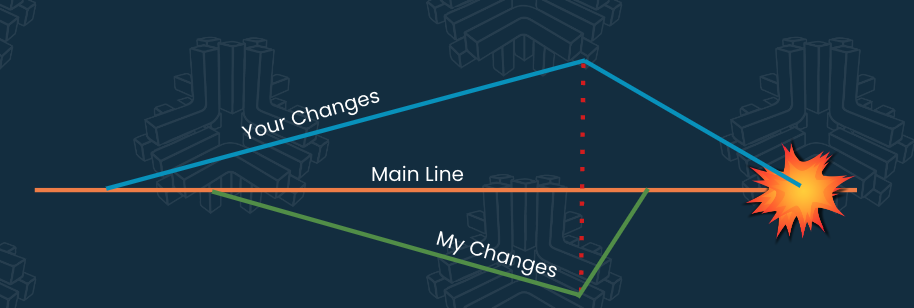

Branch Divergence

When multiple people are working in the same software system, they sometimes make changes that won’t fit together cleanly, causing integration issues and unintended system effects.

That kind of divergence is more likely if people keep their changes separated and private for a long time.

How long a time? Maybe days, maybe hours. It depends on your situation.

Integrating smaller changes more often (cf. Continuous Integration and Trunk-Based Development) helps to keep big divergences from appearing, or at least ensures you catch them sooner.

If you are sharing your changes several times a day, you can’t get nearly as far out of sync without noticing.

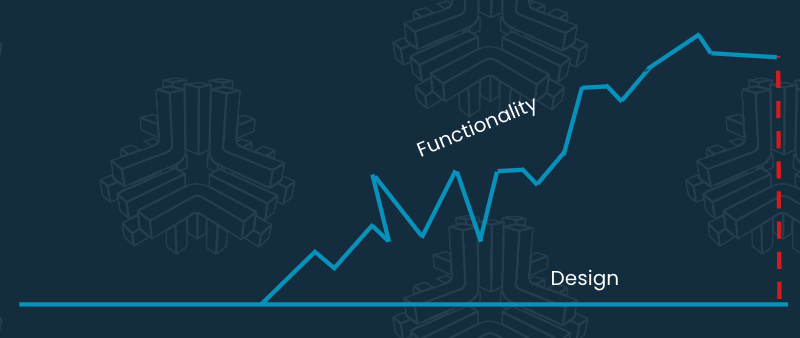

Design vs Functionality

When you are incrementally adding features, the actual behavior of the system starts to diverge from the original design. Of course, the current functionality ought to dictate the design (“form follows function”) so preserving the original (naive, primitive) design ensures that we’ll have problems supporting new features.

If there isn’t frequent reiteration of the design, then the old design no longer supports the new behaviors.

Eventually, it may seem that everything the system is doing is an exception to a design that is still present even though it isn’t valid anymore.

We revisit the design (refactor) to keep the design in step with the functionality of the system. It is a way of combatting divergence.

In the old way of working, that would be seen as “rework” and we would try to minimize or eliminate changes to the original plan of the software.

In the new way of working, it is an intentional feature of the project, so it is an “evolution of the design” and absolutely intentional and accepted.

Ward Cunningham said that “when we gather understanding, we return to the code base and change the code so that it looks like we knew what we were doing, to begin with, and that it had been easy to do.”

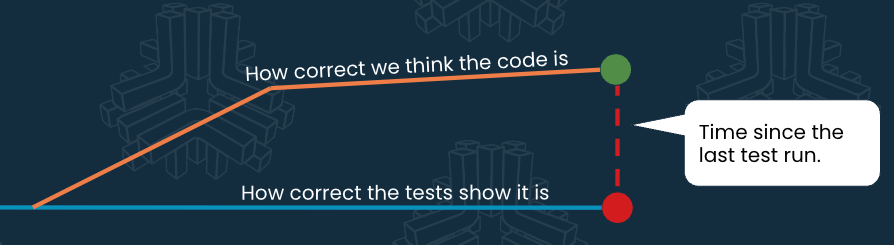

Accuracy Drift

Why run all the tests all the time? What’s the point of continuous testing?

It’s because we sometimes believe that the code is in better condition than it really is.

We feel confident that we’ve made good changes and we’ve thought them through, but we may not be as correct about the whole system as we’d like.

Most programmers only know 5-15% of the code base of the system they work in. It’s very easy to make a local change that seems fine, but which causes problems elsewhere in the system.

We need to have a lot of high-speed, high-confidence tests, and we need to run them very frequently indeed!

We don’t want to find out that we’d made a crucial mistake 76 changes ago! It’s easier if we know ASAP before we compound errors.
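To make the cost of late discovery concrete, here is a minimal sketch in Python. It assumes a hypothetical history of 76 changes in which the very first one introduced the fault, that the tests passed before that history began, and that the fault stays visible once introduced. The names `first_failing_change` and `passes_after` are illustrative, not part of any real tool. Without tests run along the way, we are left searching the history after the fact:

```python
from typing import Callable, Tuple


def first_failing_change(
    change_count: int, passes_after: Callable[[int], bool]
) -> Tuple[int, int]:
    """Binary-search for the first change after which the tests fail.

    Assumes the tests fail after the last change and that, once broken,
    the system stays broken. Returns (index of bad change, test runs used).
    """
    lo, hi = 0, change_count - 1  # hi is known to be failing
    runs = 0
    while lo < hi:
        mid = (lo + hi) // 2
        runs += 1  # each probe means a full run of the test suite
        if passes_after(mid):
            lo = mid + 1  # fault was introduced later than mid
        else:
            hi = mid  # fault was introduced at mid or earlier
    return lo, runs


# Hypothetical history: 76 changes, and change 0 introduced the fault.
BAD_CHANGE = 0
bad_index, test_runs = first_failing_change(76, lambda i: i < BAD_CHANGE)
print(f"fault introduced by change {bad_index}, found after {test_runs} extra test runs")
```

Had the tests run after every change, the failure would have pointed at a single change with no search at all, and none of the later changes would have been built on top of the mistake.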

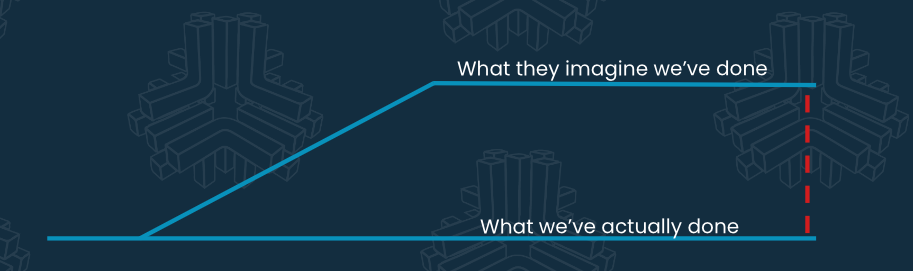

Status Divergence

Why do we make our work available to our sponsors continuously (cf. Continuous Deployment or Continuous Delivery)?

Divergent notions of progress can cause a lot of frustration and poor decision-making in a team.

The need for feedback on progress and content requires us to show working code that has the current features enabled and running.

An agile mantra is “working software is the primary measure of progress.”

If some feature isn’t enabled and working in the application, then it’s not really done (even if the team claims it’s “code complete”).

Running, tested, working code is more reliable than a status report; managers and stakeholders feel less suspicious when they can run the software themselves.

Knowledge Divergence

Why do developers think they need to keep up with developments in the software industry?

If we aren’t careful, we could all be writing obsolete code that runs only on obsolete hardware or browsers. It’s surprisingly easy, and people find themselves in this situation often enough.

Not only would that limit our product’s market, but also our job prospects.

It also means that we haven’t learned a whole host of things that might make it easier for us to build successful features.

Maybe they’re still hard-coding counted for loops when they could be using comprehensions, streams, and set operations. These are small things that can make writing new code safer and easier, but not everyone has kept up with current idioms.
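As a small illustration of that gap, here is a sketch in Python with made-up data (the `orders` list, the `vip` set, and both function names are hypothetical). The first function uses a counted loop with manual indexing; the second gets the same answer with a set comprehension, and a set operation then answers a follow-up question in one line:

```python
# Hypothetical data: (customer, amount) pairs and a set of VIP customers.
orders = [("alice", 30), ("bob", 0), ("carol", 45), ("alice", 10)]
vip = {"alice", "dave"}


def paying_customers_counted(orders):
    """Older idiom: counted loop, manual indexing, manual de-duplication."""
    result = []
    for i in range(len(orders)):
        name = orders[i][0]
        amount = orders[i][1]
        if amount > 0 and name not in result:
            result.append(name)
    return result


def paying_customers(orders):
    """Newer idiom: a set comprehension states the intent directly."""
    return {name for name, amount in orders if amount > 0}


paying = paying_customers(orders)
paying_vips = paying & vip  # set intersection replaces another nested loop

print(sorted(paying))
print(sorted(paying_vips))
```

Neither version is wrong on its own. The shorter one simply leaves fewer places for an off-by-one or a forgotten duplicate check to hide.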

Maybe they could be using better HTML writing tools, better CSS features, better security scanners and type checkers. Perhaps entire swaths of the current system can be replaced now with commodity services.

Maybe they’re testing their code on a mis-configured system that masks serious problems.

If we don’t know, we don’t know. We’re left doing hard things by hand instead of leveraging all the easy things that are now available.

Sometimes a less enlightened manager will be fed up with “losing time” to “technical tail-chasing” and will opine that the programmers “should already know how to program before we hire them so we don’t waste time and money training them!”

I’ve also heard people complaining that “some team of 4 people in their garage did a system as complicated as this one (and even nicer) in a few months!”

The difference? The small, fast team leveraged the modern languages, tools, editors, servers, and services available to them.

Your teams may still be trying to update a system from 1990s technology to a 2000-era technology base, and facing managerial as well as technical resistance all along the way.

While I feel the frustration and sympathize, one must remember that a large percentage of the things we do every day didn’t exist 5 years ago. Nobody learned everything they will someday need to know in university.

If we want our people to accomplish modern results, we have to see to their modernization.

The search for better ways has to include better technology as well as techniques.

Conclusion For Now

There is so much more to learn through our lens of divergence, and hopefully, you will be able to see the world this way. It will frustrate you but also lead to great breakthroughs in your product community if you work to control unwanted divergence with frequent adjustments (iterations).

Some questions to consider:

- Why do frameworks insist that we use cross-functional teams?

- How often should we check for divergence and make corrections in our domain?

- Should we get developers and customers more closely involved together?

- What is the justification for having people do solo assignments?

- Is our current policy for branching and Pull Requests working for or against us?

- What is the advantage of Continuous Deployment?

- How can we tell if management and developers aren’t pursuing the same goals?

- Do our incentives focus on planned activity too much and drive divergence?

- Why do so many coaches argue against separating bug-fixing and code-writing teams?

- What other kinds of divergence do we suffer with daily?